Associate Professor (part-time) at the Department of Computer Science of the University of Copenhagen (Denmark)

Contact (University of Münster)

- Room 233, Leonardo-Campus 3, 48147 Münster, Germany

- +49 251 8338151

- Universitetsparken 1, DK-2100 Copenhagen, Denmark

Bio

- Full Professor (W3), Department of Information Systems, University of Münster (Germany), 2020-today.

- Associate Professor (part-time), Department of Computer Science, University of Copenhagen (Denmark), 2021-today.

- Assistant Professor (Tenure-Track), Department of Computer Science, University of Copenhagen (Denmark), 2016-2021.

- Postdoctoral Researcher, Institute for Computing and Information Sciences (iCIS), Radboud University Nijmegen (Netherlands), 2014-2016 (supported by the Radboud Excellence Initiative).

- Postdoctoral Researcher, Department of Computer Science, University of Copenhagen (Denmark), 2013-2014 (supported by the German Academic Exchange Service).

- Postdoctoral Researcher, Department of Computer Science, University of Oldenburg (Germany), 2012-2013.

- Doctoral Degree (Dr. rer. nat.), Department of Computer Science, University of Oldenburg, Germany, 2006-2012.

- Diploma in Mathematics (with distinction), University of Münster, Germany, 2001-2006.

- Diploma in Computer Science, University of Münster, Germany, 2001-2006.

Research Interests

- Efficient Large-Scale Machine Learning

- Machine Learning for Resource-Constrained Devices

- High-Performance Computing

- Database Systems and Machine Learning

- Remote Sensing

- Energy Systems

Publications

- K. Schrödter, J. Stenkamp, N. Herrmann, and F. Gieseke. CPAL26: Third Conference on Parsimony and Learning (CPAL), 2026

The growing demand for machine learning applications in the context of the Internet of Things calls for new approaches to optimize the use of limited compute and memory resources. Despite significant progress that has been made w.r.t. reducing model sizes and improving efficiency, many applications still require remote servers to provide the required resources. However, such approaches rely on transmitting data from edge devices to remote servers, which may not always be feasible due to bandwidth, latency, or energy constraints. We propose a task-specific, trainable feature quantization layer that compresses the input features of a neural network. This can significantly reduce the amount of data that needs to be transferred from the device to a remote server. In particular, the layer allows each input feature to be quantized to a user-defined number of bits, enabling a simple on-device compression at the time of data collection. The layer is designed to approximate step functions with sigmoids, enabling trainable quantization thresholds. By concatenating outputs from multiple sigmoids, introduced as bitwise soft quantization, it achieves trainable quantized values when integrated with a neural network. We compare our method to full-precision inference as well as to several quantization baselines. Experiments show that our approach outperforms standard quantization methods, while maintaining accuracy levels close to those of full-precision models. In particular, depending on the dataset at hand, compression factors of to can be achieved without significant performance loss.
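The idea of approximating step functions with sigmoids can be illustrated with a minimal sketch. This is not the authors' layer: the function names, the `temperature` parameter, and the example thresholds are assumptions chosen for illustration, and the thresholds are fixed here rather than trained.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def soft_quantize(x, thresholds, temperature):
    """Return one soft 'bit' in (0, 1) per threshold for a scalar feature x.

    Each sigmoid is a smooth stand-in for the step function 1[x > t];
    a smaller temperature makes it steeper and closer to a hard step.
    """
    return [sigmoid((x - t) / temperature) for t in thresholds]

def hard_quantize(x, thresholds):
    """Hard counterpart: one step function per threshold."""
    return [1 if x > t else 0 for t in thresholds]

# Hypothetical thresholds; in a trainable layer these would be parameters.
thresholds = [0.25, 0.5, 0.75]
soft = soft_quantize(0.6, thresholds, temperature=0.01)
hard = hard_quantize(0.6, thresholds)
```

With a small temperature the soft outputs are close to the hard bits, while a larger temperature keeps them smooth enough for gradient-based training of the thresholds.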

@inproceedings{schroedter2026bitwisequant, title = {Trainable Bitwise Soft Quantization for Input Feature Compression}, author = {Schrödter, Karsten and Stenkamp, Jan and Herrmann, Nina and Gieseke, Fabian}, booktitle = {Third Conference on Parsimony and Learning (CPAL)}, year = {2026}, tags = {ml,application,rs}, projects = {ai4forest} }

- J. Stenkamp, M. Hunke, C. Karatas, S. Kirchhoff, C. Knaden, P. Naebers, L. Zhao, B. Karic, F. Gieseke, and N. Herrmann. SENSYS26: ACM/IEEE International Conference on Embedded Artificial Intelligence and Sensing Systems (SenSys), 2026

As cities strive to reduce car dependency and promote sustainable transportation, encouraging bicycle usage becomes a vital part of the urban planning process. The existence of a sufficient number of bicycle storage facilities is a key building block, as it reduces the likelihood of bicycle theft and the necessity for bicycle repairs. By monitoring the utilization of bicycle parking lots, supply shortfalls can be detected, and users can be informed about the availability of slots. However, detection systems face multiple challenges. Equipping every parking slot with individual sensors is costly, and transmitting visual data can raise privacy concerns or even discourage users. To address this problem, embedded machine learning can be used to process visual data locally and transmit only the resulting count to a central server. This work sets out a real-world use case for microcontrollers that are equipped with a camera and an embedded machine learning model for the purpose of counting parked bicycles. A custom dataset was collected and labeled to train an object-detection model, which was subsequently compressed and deployed on an ESP32-S3 microcontroller that processes the image data locally and transmits only the bicycle count to a remote server via LoRaWAN. The model compression incurs only a marginal performance degradation, with the compressed model still achieving an AP@50 of 0.91. Hence, our approach demonstrates the practical realization of recent theoretical advances in tiny machine learning and provides a viable solution for monitoring bicycle parking facilities in real-world settings.

@inproceedings{Stenkampcountingparkedbicycles, title = {Counting Parked Bicycles on the Edge - A TinyML Smart City Application}, author = {Stenkamp, Jan and Hunke, Mathis and Karatas, Cem and Kirchhoff, Steffen and Knaden, Christoph and Naebers, Paul and Zhao, Lige and Karic, Benjamin and Gieseke, Fabian and Herrmann, Nina}, booktitle = {ACM/IEEE International Conference on Embedded Artificial Intelligence and Sensing Systems (SenSys)}, year = {2026}, tags = {ml,application,rs}, projects = {tinyaiot} }

- N. Herrmann, J. Stenkamp, B. Karic, S. Oehmcke, and F. Gieseke. ICLR26: The Fourteenth International Conference on Learning Representations (ICLR), 2026

Deploying machine learning models on compute-constrained devices has become a key building block of modern IoT applications. In this work, we present a compression scheme for boosted decision trees, addressing the growing need for lightweight machine learning models. Specifically, we provide techniques for training compact boosted decision tree ensembles that exhibit a reduced memory footprint by rewarding, among other things, the reuse of features and thresholds during training. Our experimental evaluation shows that models achieved the same performance with a compression ratio of 4–16x compared to LightGBM models using an adapted training process and an alternative memory layout. Once deployed, the corresponding IoT devices can operate independently of constant communication or external energy supply, and, thus, autonomously, requiring only minimal computing power and energy. This capability opens the door to a wide range of IoT applications, including remote monitoring, edge analytics, and real-time decision making in isolated or power-limited environments.

@inproceedings{treesonadiet, title = {Boosted Trees on a Diet: Compact Models for Resource-Constrained Devices}, author = {Herrmann, Nina and Stenkamp, Jan and Karic, Benjamin and Oehmcke, Stefan and Gieseke, Fabian}, booktitle = {The Fourteenth International Conference on Learning Representations (ICLR)}, year = {2026}, tags = {ml,de,energy,rs}, projects = {tinyaiot}, url = {https://openreview.net/forum?id=batDcksZsh} }

- K. Schrödter, J. Pauls, and F. Gieseke. AISTATS26: Twenty-Ninth Annual Conference on Artificial Intelligence and Statistics (AISTATS), 2026

Accurate tree height estimation is vital for ecological monitoring and biomass assessment. We apply quantile regression to existing tree height estimation models based on satellite data to incorporate uncertainty quantification. Most current approaches on tree height estimation rely on point predictions, which limits their applicability in risk-sensitive scenarios. In this work, we show that with minor modifications to the prediction head, existing models can be adapted to provide statistically calibrated uncertainty estimates via quantile regression. Furthermore, we demonstrate how our results correlate with known challenges in remote sensing (e.g., terrain complexity, vegetation heterogeneity), indicating that the model is less confident in more challenging conditions.
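Quantile regression is commonly trained with the pinball loss, which penalizes under- and over-prediction asymmetrically depending on the quantile level. The sketch below shows that standard loss only; whether the paper uses exactly this formulation is an assumption, and the function names and example heights are hypothetical.

```python
def pinball_loss(y_true, y_pred, q):
    """Pinball (quantile) loss for one target/prediction pair, with q in (0, 1)."""
    diff = y_true - y_pred
    # Under-prediction (diff > 0) costs q * diff,
    # over-prediction (diff < 0) costs (1 - q) * |diff|.
    return max(q * diff, (q - 1.0) * diff)

def mean_pinball_loss(targets, predictions, q):
    """Average pinball loss over paired targets and predictions."""
    return sum(pinball_loss(t, p, q) for t, p in zip(targets, predictions)) / len(targets)

# Hypothetical tree heights in meters.
heights = [10.0, 12.0, 15.0, 20.0]

# For an upper quantile (q = 0.9), predicting too low is penalized
# more strongly than predicting too high by the same margin.
under = pinball_loss(10.0, 8.0, q=0.9)
over = pinball_loss(10.0, 12.0, q=0.9)
```

Training one output per quantile level (for example 0.05, 0.5, and 0.95) with this loss yields a prediction interval alongside the median estimate.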

@inproceedings{schroedter2026uncertaintytree, title = {Canopy Tree Height Estimation using Quantile Regression: Modeling and Evaluating Uncertainty in Remote Sensing}, author = {Schrödter, Karsten and Pauls, Jan and Gieseke, Fabian}, booktitle = {Twenty-Ninth Annual Conference on Artificial Intelligence and Statistics (AISTATS)}, year = {2026}, tags = {ml,application,rs}, projects = {ai4forest} }

- I. Fayad, M. Zimmer, M. Schwartz, P. Ciais, F. Gieseke, G. Belouze, S. Brood, A. De Truchis, and A. d’Aspremont. ICML25: 42nd International Conference on Machine Learning (ICML), 2025

Significant efforts have been directed towards adapting self-supervised multimodal learning for Earth observation applications. However, existing methods produce coarse patch-sized embeddings, limiting their effectiveness and integration with other modalities like LiDAR. To close this gap, we present DUNIA, an approach to learn pixel-sized embeddings through cross-modal alignment between images and full-waveform LiDAR data. As the model is trained in a contrastive manner, the embeddings can be directly leveraged in the context of a variety of environmental monitoring tasks in a zero-shot setting. In our experiments, we demonstrate the effectiveness of the embeddings for seven such tasks (canopy height mapping, fractional canopy cover, land cover mapping, tree species identification, plant area index, crop type classification, and per-pixel waveform-based vertical structure mapping). The results show that the embeddings, along with zero-shot classifiers, often outperform specialized supervised models, even in low data regimes. In the fine-tuning setting, we show strong low-shot capabilities with performances near or better than state-of-the-art on five out of six tasks.

@inproceedings{fayad2025dunia, title = {DUNIA: Pixel-Sized Embeddings via Cross-Modal Alignment for Earth Observation Applications}, author = {Fayad, Ibrahim and Zimmer, Max and Schwartz, Martin and Ciais, Philippe and Gieseke, Fabian and Belouze, Gabriel and Brood, Sarah and De Truchis, Aurelien and d'Aspremont, Alexandre}, booktitle = {42nd International Conference on Machine Learning (ICML)}, year = {2025}, tags = {ml,application}, projects = {ai4forest} }

- J. Pauls, M. Zimmer, B. Turan, S. Saatchi, P. Ciais, S. Pokutta, and F. Gieseke. ICML25: 42nd International Conference on Machine Learning (ICML), 2025

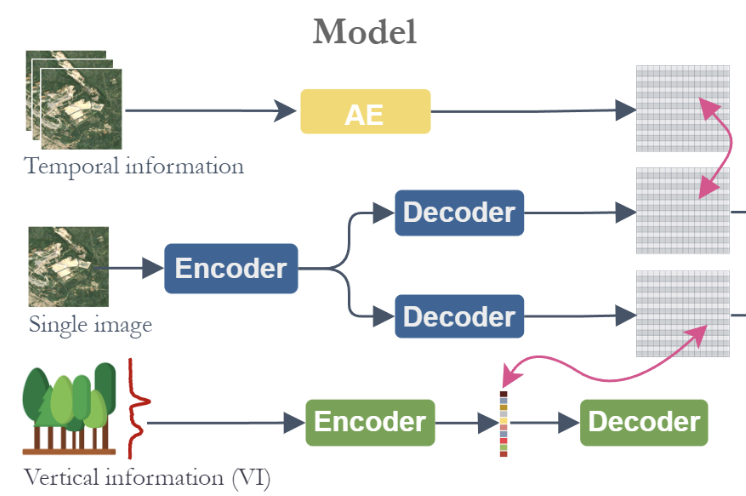

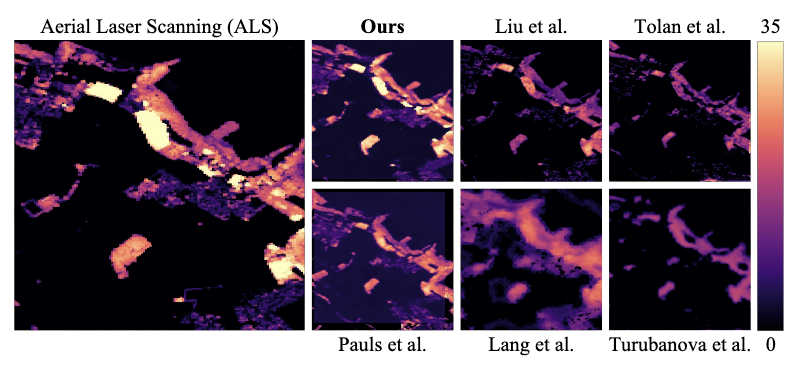

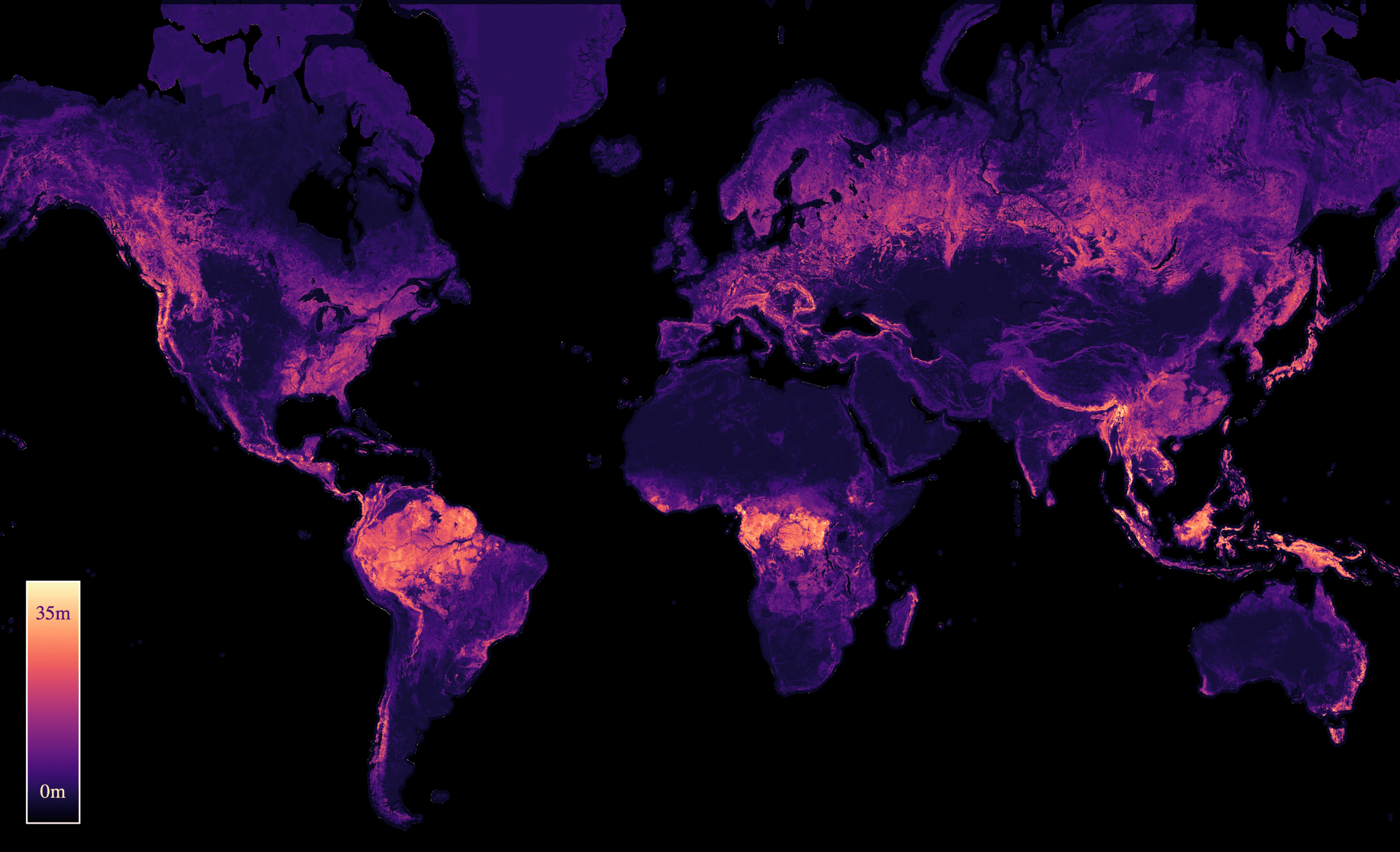

With the rise in global greenhouse gas emissions, accurate large-scale tree canopy height maps are essential for understanding forest structure, estimating above-ground biomass, and monitoring ecological disruptions. To this end, we present a novel approach to generate large-scale, high-resolution canopy height maps over time. Our model accurately predicts canopy height over multiple years given Sentinel-2 time series satellite data. Using GEDI LiDAR data as the ground truth for training the model, we present the first 10m resolution temporal canopy height map of the European continent for the period 2019-2022. As part of this product, we also offer a detailed canopy height map for 2020, providing more precise estimates than previous studies. Our pipeline and the resulting temporal height map are publicly available, enabling comprehensive large-scale monitoring of forests and, hence, facilitating future research and ecological analyses. For an interactive viewer, see https://europetreemap.projects.earthengine.app/view/temporalcanopyheight.

@inproceedings{pauls2025capturing, title = {Capturing Temporal Dynamics in Large-Scale Canopy Tree Height Estimation}, author = {Pauls, Jan and Zimmer, Max and Turan, Berkant and Saatchi, Sassan and Ciais, Philippe and Pokutta, Sebastian and Gieseke, Fabian}, booktitle = {42nd International Conference on Machine Learning (ICML)}, year = {2025}, custom = {Earth Engine|https://europetreemap.projects.earthengine.app/view/temporalcanopyheight}, tags = {ml, application}, projects = {ai4forest} }

- P. N. Bernardino, W. D. Keersmaecker, S. Horion, R. V. D. Kerchove, S. Lhermitte, R. Fensholt, S. Oehmcke, F. Gieseke, K. V. Meerbeek, C. Abel, J. Verbesselt, and B. Somers. Journal: Nature Climate Change, 2025

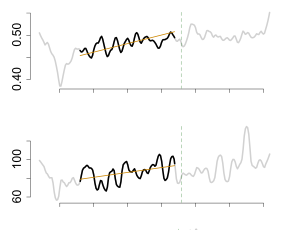

Climate change and human-induced land degradation threaten dryland ecosystems, vital to one-third of the global population and pivotal to inter-annual global carbon fluxes. Early warning systems are essential for guiding conservation, climate change mitigation and alleviating food insecurity in drylands. However, contemporary methods fail to provide large-scale early warnings effectively. Here we show that a machine learning-based approach can predict the probability of abrupt shifts in Sudano–Sahelian dryland vegetation functioning (75.1% accuracy; 76.6% precision) particularly where measures of resilience (temporal autocorrelation) are supplemented with proxies for vegetation and rainfall dynamics and other environmental factors. Regional-scale predictions for 2025 highlight a belt in the south of the study region with high probabilities of future shifts, largely linked to long-term rainfall trends. Our approach can provide valuable support for the conservation and sustainable use of dryland ecosystem services, particularly in the context of climate change projected drying trends.

@article{BernardinoKHKLFOGMAVS2025, author = {Bernardino, Paulo Negri and Keersmaecker, Wanda De and Horion, Stéphanie and Kerchove, Ruben Van De and Lhermitte, Stef and Fensholt, Rasmus and Oehmcke, Stefan and Gieseke, Fabian and Meerbeek, Koenraad Van and Abel, Christin and Verbesselt, Jan and Somers, Ben}, title = {Predictability of abrupt shifts in dryland ecosystem functioning}, journal = {Nature Climate Change}, year = {2025}, volume = {15}, pages = {86--91}, doi = {10.1038/s41558-024-02201-0}, tags = {application,rs}, }

- M. Brandt, J. Chave, S. Li, R. Fensholt, P. Ciais, J. Wigneron, F. Gieseke, S. Saatchi, C. J. Tucker, and C. Igel. Journal: Nature Reviews Electrical Engineering, 2025

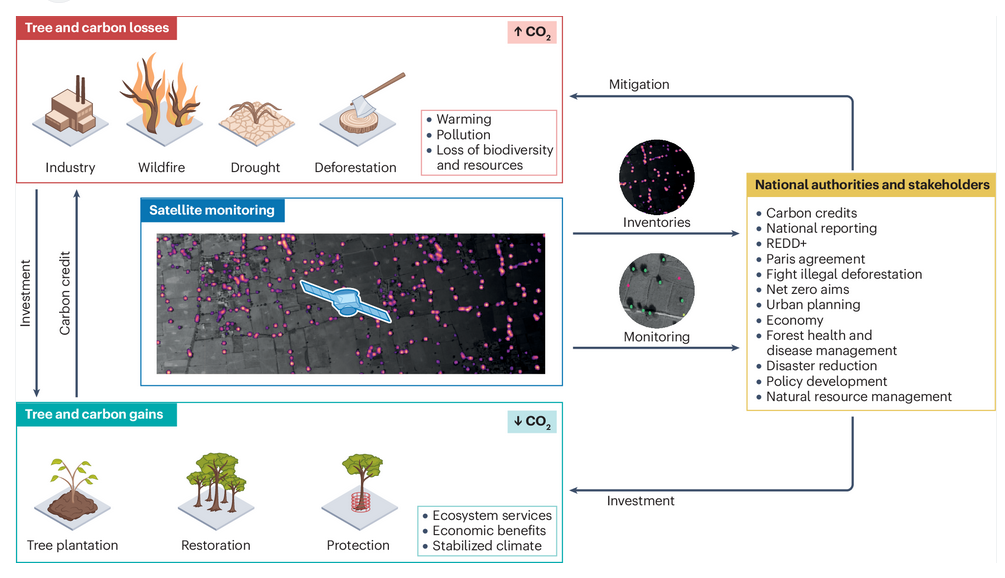

Trees contribute to carbon dioxide absorption through biomass, regulate the climate, support biodiversity, enhance soil, air and water quality, and offer economic and health benefits. Traditionally, tree monitoring on continental and global scales has focused on forest cover, whereas assessing biomass and species diversity, as well as trees outside closed-canopy forests, has been challenging. A new generation of commercial and public satellites and sensors provide high-resolution spatial and temporal optical data that can be used to identify trees as objects. Technologies from the field of artificial intelligence, such as convolutional neural networks and vision transformers, can go beyond detecting these objects as two-dimensional representations, and support characterization of the three-dimensional structure of objects, such as canopy height and wood volume, via contextual learning from two-dimensional images. These advancements enable reliable characterization of trees, their structure, biomass and diversity both inside and outside forests. Furthermore, self-supervision and foundation models facilitate large-scale applications without requiring extensive amounts of labels. Here, we summarize these advances, highlighting their application towards consistent tree monitoring systems that can assess carbon stocks, attribute losses and gains to underlying drivers and, ultimately, contribute to climate change mitigation.

@article{BrandtCLFCWGSTI2025HighResolution, author = {Brandt, Martin and Chave, Jerome and Li, Sizhuo and Fensholt, Rasmus and Ciais, Philippe and Wigneron, Jean-Pierre and Gieseke, Fabian and Saatchi, Sassan and Tucker, C. J. and Igel, Christian}, title = {High-resolution sensors and deep learning models for tree resource monitoring}, journal = {Nature Reviews Electrical Engineering}, year = {2025}, volume = {2}, pages = {13--26}, doi = {10.1038/s44287-024-00116-8}, tags = {application,rs}, }

- J. Pauls, M. Zimmer, U. M. Kelly, M. Schwartz, S. Saatchi, P. Ciais, S. Pokutta, M. Brandt, and F. Gieseke. ICML24: 41st International Conference on Machine Learning (ICML), 2024

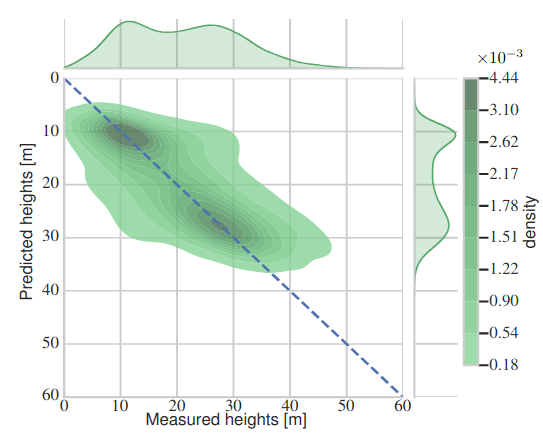

We propose a framework for global-scale canopy height estimation based on satellite data. Our model leverages advanced data preprocessing techniques, resorts to a novel loss function designed to counter geolocation inaccuracies inherent in the ground-truth height measurements, and employs data from the Shuttle Radar Topography Mission to effectively filter out erroneous labels in mountainous regions, enhancing the reliability of our predictions in those areas. A comparison between predictions and ground-truth labels yields an MAE / RMSE of 2.43 / 4.73 (meters) overall and 4.45 / 6.72 (meters) for trees taller than five meters, which depicts a substantial improvement compared to existing global-scale maps. The resulting height map as well as the underlying framework will facilitate and enhance ecological analyses at a global scale, including, but not limited to, large-scale forest and biomass monitoring.

@inproceedings{pauls2024estimating, title = {Estimating Canopy Height at Scale}, author = {Pauls, Jan and Zimmer, Max and Kelly, Una M. and Schwartz, Martin and Saatchi, Sassan and Ciais, Philippe and Pokutta, Sebastian and Brandt, Martin and Gieseke, Fabian}, booktitle = {41st International Conference on Machine Learning (ICML)}, year = {2024}, custom = {Earth Engine|https://worldwidemap.projects.earthengine.app/view/canopy-height-2020}, tags = {ml,de,application}, projects = {ai4forest} }

- C. Lülf, D. M. Lima Martins, S. M. A. Vaz, Y. Zhou, and F. Gieseke. SIGIR24: Proceedings of the 47th International ACM SIGIR Conference on Research and Development in Information Retrieval (Demo Track), 2024

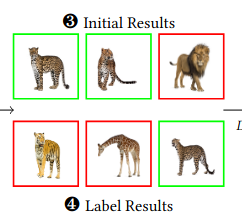

The advent of text-image models, most notably CLIP, has significantly transformed the landscape of information retrieval. These models enable the fusion of various modalities, such as text and images. One significant outcome of CLIP is its capability to allow users to search for images using text as a query, as well as vice versa. This is achieved via a joint embedding of images and text data that can, for instance, be used to search for similar items. Despite efficient query processing techniques such as approximate nearest neighbor search, the results may lack precision and completeness. We introduce CLIP-Branches, a novel text-image search engine built upon the CLIP architecture. Our approach enhances traditional text-image search engines by incorporating an interactive fine-tuning phase, which allows the user to further concretize the search query by iteratively defining positive and negative examples. Our framework involves training a classification model given the additional user feedback and essentially outputs all positively classified instances of the entire data catalog. By building upon recent techniques, this inference phase, however, is not implemented by scanning the entire data catalog, but by employing efficient index structures pre-built for the data. Our results show that the fine-tuned results can improve the initial search outputs in terms of relevance and accuracy while maintaining swift response times.

@inproceedings{LuelfLMVZG2024CLIPBranches, author = {Lülf, Christian and {Lima Martins}, Denis Mayr and Vaz, Salles Marcos Antonio and Zhou, Yongluan and Gieseke, Fabian}, title = {CLIP-Branches: Interactive Fine-Tuning for Text-Image Retrieval}, booktitle = {Proceedings of the 47th International ACM SIGIR Conference on Research and Development in Information Retrieval (Demo Track)}, year = {2024}, address = {Washington, D.C.}, tags = {ml,de}, }

- S. Oehmcke, L. Li, K. Trepekli, J. C. Revenga, T. Nord-Larsen, F. Gieseke, and C. Igel. Journal: Remote Sensing of Environment, 2024

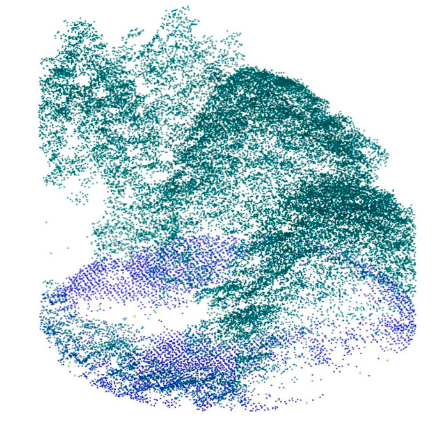

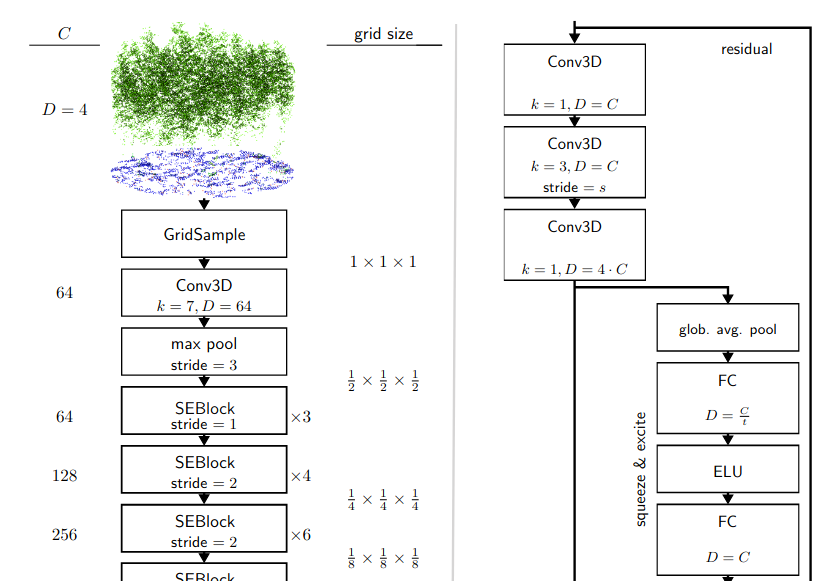

Quantifying forest biomass stocks and their dynamics is important for implementing effective climate change mitigation measures by aiding local forest management, studying processes driving af-, re-, and deforestation, and improving the accuracy of carbon accounting. Owing to the 3-dimensional nature of forest structure, remote sensing using airborne LiDAR can be used to perform these measurements of vegetation structure at large scale. Harnessing the full dimensionality of the data, we present deep learning systems predicting wood volume and above-ground biomass (AGB) directly from the full LiDAR point cloud and compare results to state-of-the-art approaches operating on basic statistics of the point clouds. For this purpose, we devise different neural network architectures for point cloud regression and evaluate them on remote sensing data of areas for which AGB estimates have been obtained from field measurements in the Danish national forest inventory. Our adaptation of Minkowski convolutional neural networks for regression gives the best results. The deep neural networks produce significantly more accurate wood volume, AGB, and carbon stock estimates compared to state-of-the-art approaches. In contrast to other methods, the proposed deep learning approach does not require a digital terrain model and is robust to artifacts along the boundaries of the evaluated areas, which we demonstrate for the case where trees protrude into the area from the outside. We expect this finding to have a strong impact on LiDAR-based analyses of biomass dynamics.

@article{OehmckeLTRNLGI2024DeepPointCloud, author = {Oehmcke, Stefan and Li, Lei and Trepekli, Katerina and Revenga, Jaime C. and Nord-Larsen, Thomas and Gieseke, Fabian and Igel, Christian}, title = {Deep point cloud regression for above-ground forest biomass estimation from airborne LiDAR}, journal = {Remote Sensing of Environment}, year = {2024}, volume = {302}, doi = {10.1016/j.rse.2023.113968}, tags = {rs,ml,application} }

- D. L. M. Martins, C. Lülf, and F. Gieseke. ESANN23: 31st European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning, 2023

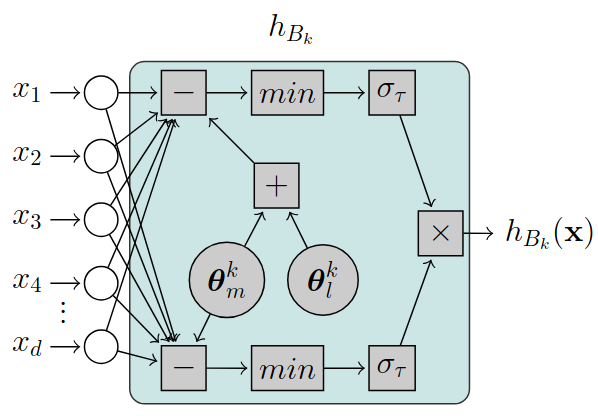

Hyperbox-based classification has been seen as a promising technique in which decisions on the data are represented as a series of orthogonal, multidimensional boxes (i.e., hyperboxes) that are often interpretable and human-readable. However, existing methods are no longer capable of efficiently handling the increasing volume of data many application domains face nowadays. We address this gap by proposing a novel, fully differentiable framework for hyperbox-based classification via neural networks. In contrast to previous work, our hyperbox models can be efficiently trained in an end-to-end fashion, which leads to significantly reduced training times and superior classification results.

@inproceedings{LimaDCF2023EndToEndNeural, author = {Martins, Denis Lima Mayr and Lülf, Christian and Gieseke, Fabian}, title = {End-to-End Neural Network Training for Hyperbox-Based Classification}, booktitle = {31st European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning}, year = {2023}, address = {Brügge}, tags = {ml} }

- C. Lülf, D. M. Lima Martins, S. M. A. Vaz, Y. Zhou, and F. Gieseke. VLDB23: Proceedings of the VLDB Endowment, 2023

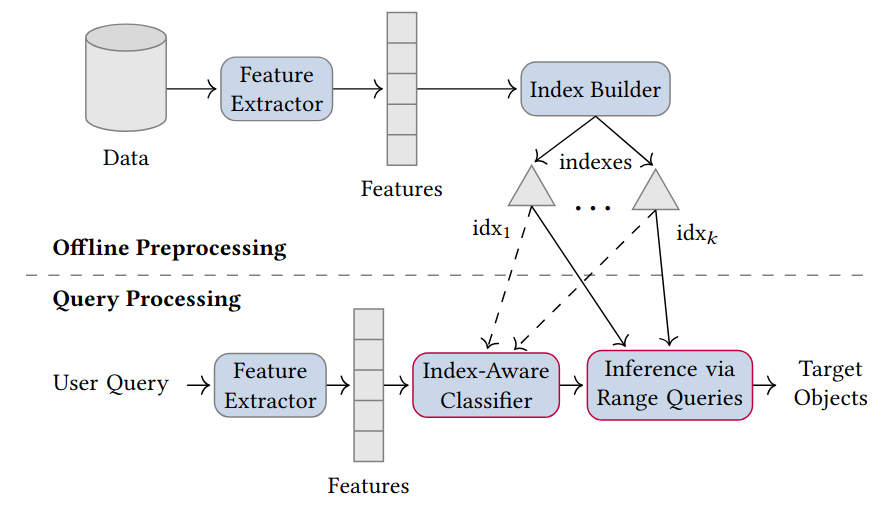

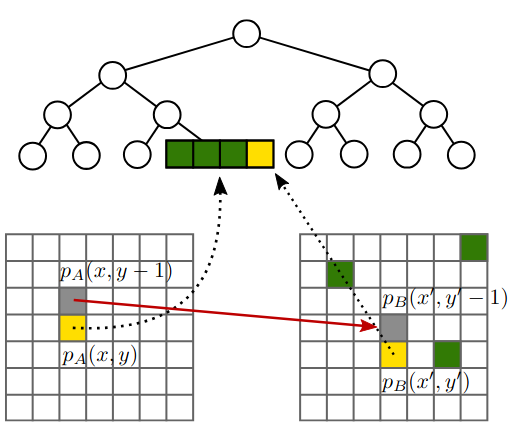

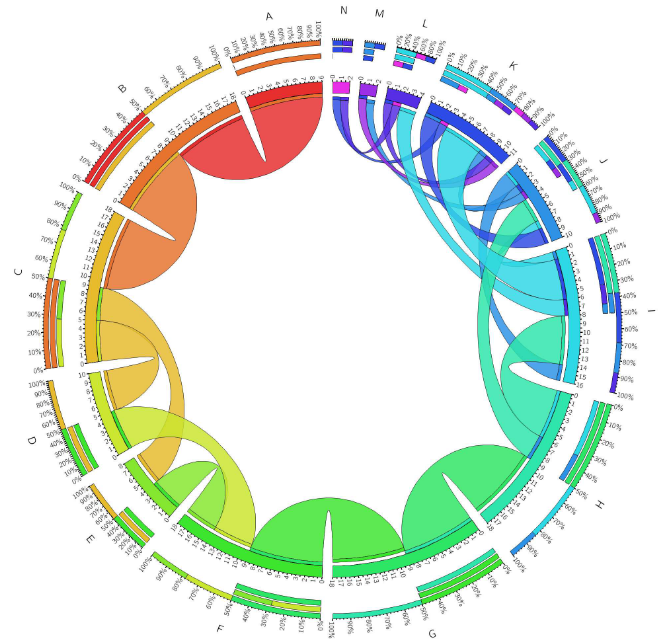

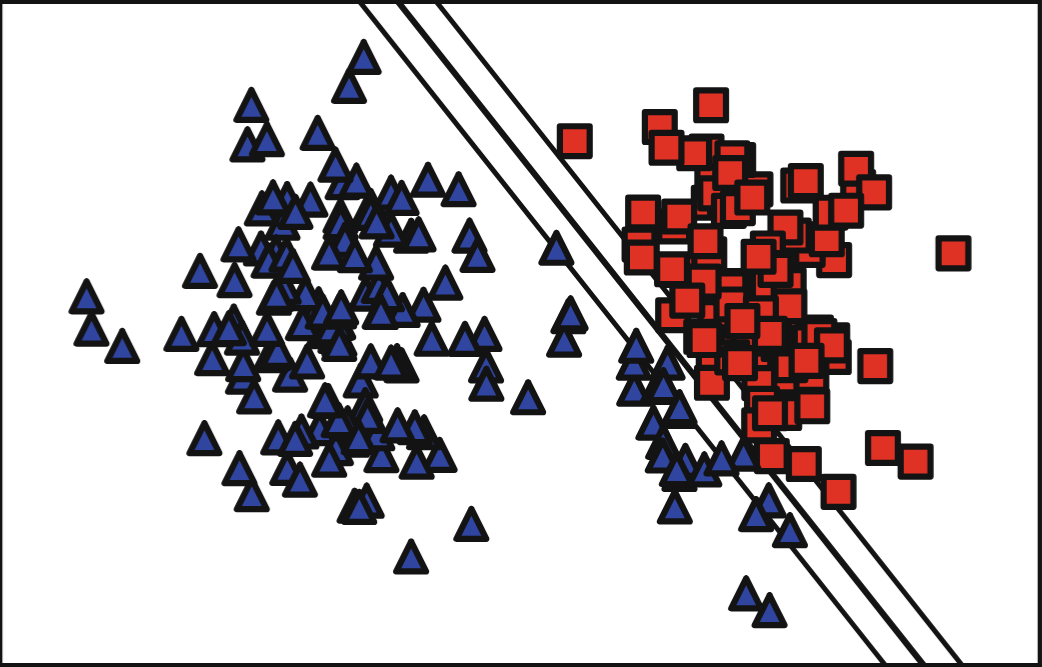

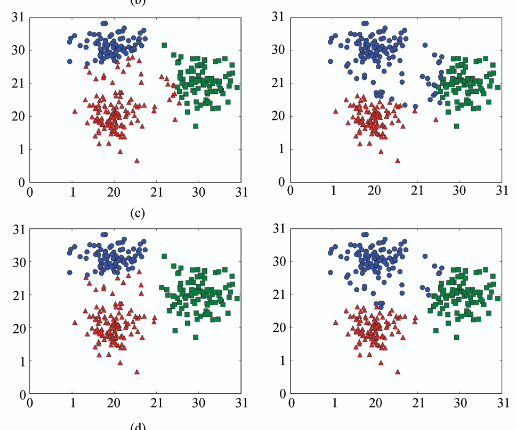

The vast amounts of data collected in various domains pose great challenges to modern data exploration and analysis. To find “interesting” objects in large databases, users typically define a query using positive and negative example objects and train a classification model to identify the objects of interest in the entire data catalog. However, this approach requires a scan of all the data to apply the classification model to each instance in the data catalog, making this method prohibitively expensive to be employed in large-scale databases serving many users and queries interactively. In this work, we propose a novel framework for such search-by-classification scenarios that allows users to interactively search for target objects by specifying queries through a small set of positive and negative examples. Unlike previous approaches, our framework can rapidly answer such queries at low cost without scanning the entire database. Our framework is based on an index-aware construction scheme for decision trees and random forests that transforms the inference phase of these classification models into a set of range queries, which in turn can be efficiently executed by leveraging multidimensional indexing structures. Our experiments show that queries over large data catalogs with hundreds of millions of objects can be processed in a few seconds using a single server, compared to hours needed by classical scanning-based approaches.
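The step from decision tree inference to range queries can be sketched as follows: every leaf of a tree with axis-parallel splits corresponds to an axis-aligned box, so the positively classified region is a union of boxes. The nested-dict tree layout and function names below are assumptions for illustration; this is a generic leaf-to-box conversion, not the index-aware construction scheme from the paper, and the membership check stands in for a real multidimensional index lookup.

```python
import math

def positive_boxes(node, box):
    """Yield one axis-aligned box per positive leaf.

    A box is a list of (low, high) pairs, one per feature, describing
    the half-open interval low < x[f] <= high.
    """
    if "label" in node:
        if node["label"] == 1:
            yield list(box)
        return
    f, t = node["feature"], node["threshold"]
    low, high = box[f]
    # Left branch: x[f] <= t, so tighten the upper bound.
    left = list(box)
    left[f] = (low, min(high, t))
    yield from positive_boxes(node["left"], left)
    # Right branch: x[f] > t, so tighten the lower bound.
    right = list(box)
    right[f] = (max(low, t), high)
    yield from positive_boxes(node["right"], right)

def in_box(x, box):
    """Range-query predicate; an index would answer this without a scan."""
    return all(low < v <= high for v, (low, high) in zip(x, box))

# Hypothetical two-feature tree: positive iff x[0] > 0.5 and x[1] <= 0.2.
tree = {
    "feature": 0, "threshold": 0.5,
    "left": {"label": 0},
    "right": {
        "feature": 1, "threshold": 0.2,
        "left": {"label": 1},
        "right": {"label": 0},
    },
}
unbounded = [(-math.inf, math.inf)] * 2
boxes = list(positive_boxes(tree, unbounded))
```

Each resulting box can be handed to a spatial index as a single range query, so only the matching objects are touched.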

@inproceedings{LuelfLMVZG2023FastSearchByClassification, author = {Lülf, Christian and {Lima Martins}, Denis Mayr and Vaz, Salles Marcos Antonio and Zhou, Yongluan and Gieseke, Fabian}, title = {Fast Search-By-Classification for Large-Scale Databases Using Index-Aware Decision Trees and Random Forests}, booktitle = {Proceedings of the VLDB Endowment}, pages = {2845--2857}, volume = {16}, editor = {VLDB, Endowment}, year = {2023}, publisher = {ACM Press}, address = {Vancouver}, issn = {2150-8097}, doi = {10.14778/3611479.3611492}, tags = {ml,de} }

- RapidEarth: A Search Engine for Large-Scale Geospatial Imagery (Best Demo Award). C. Lülf, D. M. Lima Martins, S. M. A. Vaz, Y. Zhou, and F. Gieseke. SIGSPATIAL23: Proceedings of the 31st International Conference on Advances in Geographic Information Systems, SIGSPATIAL, Demo Paper, 2023

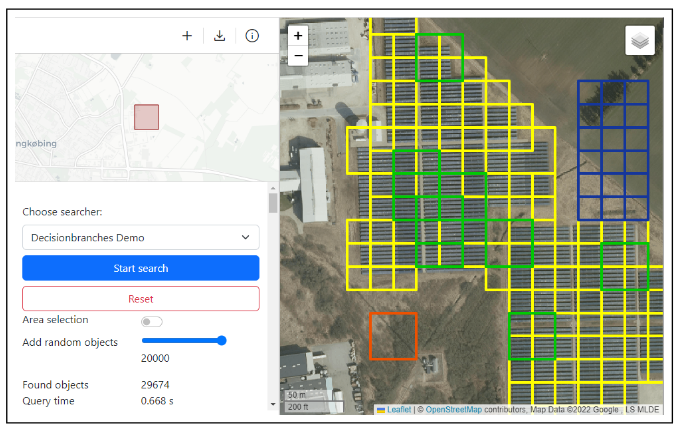

Data exploration and analysis in various domains often necessitate the search for specific objects in massive databases. A common search strategy, often known as search-by-classification, resorts to training machine learning models on small sets of positive and negative samples and to performing inference on the entire database to discover additional objects of interest. While such an approach often yields very good results in terms of classification performance, the entire database usually needs to be scanned, a process that can easily take several hours even for medium-sized data catalogs. In this work, we present RapidEarth, a geospatial search-by-classification engine that allows analysts to rapidly search for interesting objects in very large data collections of satellite imagery in a matter of seconds, without the need to scan the entire data catalog. RapidEarth embodies a co-design of multidimensional indexing structures and decision branches, a recently proposed variant of classical decision trees. These decision branches allow RapidEarth to transform the inference phase into a set of range queries, which can be efficiently processed by leveraging the aforementioned multidimensional indexing structures. The main contribution of this work is a geospatial search engine that implements these technical findings.

- S. Li, M. Brandt, R. Fensholt, A. Kariryaa, C. Igel, F. Gieseke, T. Nord-Larsen, S. Oehmcke, A. H. Carlsen, S. Junttila, X. Tong, A. d’Aspremont, and P. Ciais. Journal: PNAS Nexus, 2023

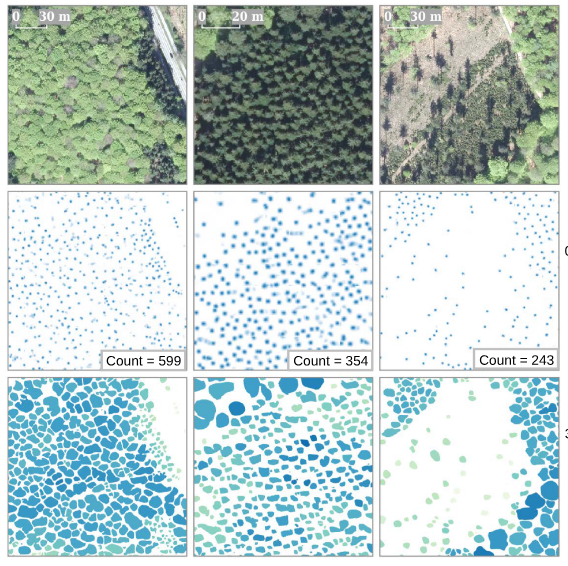

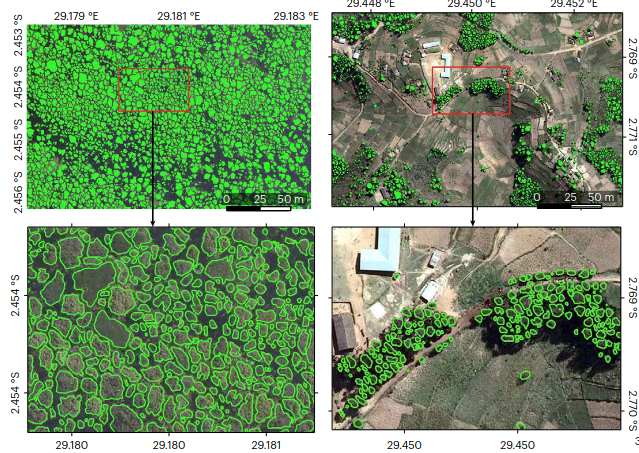

Sustainable tree resource management is the key to mitigating climate warming, fostering a green economy, and protecting valuable habitats. Detailed knowledge about tree resources is a prerequisite for such management but is conventionally based on plot-scale data, which often neglects trees outside forests. Here, we present a deep learning-based framework that provides location, crown area, and height for individual overstory trees from aerial images at country scale. We apply the framework on data covering Denmark and show that large trees (stem diameter >10 cm) can be identified with a low bias (12.5%) and that trees outside forests contribute to 30% of the total tree cover, which is typically unrecognized in national inventories. The bias is high (46.6%) when our results are evaluated against all trees taller than 1.3 m, which involve undetectable small or understory trees. Furthermore, we demonstrate that only marginal effort is needed to transfer our framework to data from Finland, despite markedly dissimilar data sources. Our work lays the foundation for digitalized national databases, where large trees are spatially traceable and manageable.

@article{LiSMRACFTSASXAP2023DeepLearning, author = {Li, Sizhuo and Brandt, Martin and Fensholt, Rasmus and Kariryaa, Ankit and Igel, Christian and Gieseke, Fabian and Nord-Larsen, Thomas and Oehmcke, Stefan and Carlsen, Ask Holm and Junttila, Samuli and Tong, Xiaoye and d’Aspremont, Alexandre and Ciais, Philippe}, title = {Deep learning enables image-based tree counting, crown segmentation, and height prediction at national scale}, journal = {PNAS Nexus}, year = {2023}, volume = {2}, number = {4}, doi = {10.1093/pnasnexus/pgad076}, tags = {rs,application,ml} }

- F. Reiner, M. Brandt, X. Tong, D. Skole, A. Kariryaa, P. Ciais, A. Davies, P. Hiernaux, J. Chave, M. Mugabowindekwe, C. Igel, S. Oehmcke, F. Gieseke, S. Li, S. Liu, S. S. Saatchi, P. Boucher, J. Singh, S. Taugourdeau, M. Dendoncker, X. Song, O. Mertz, C. Tucker, and R. Fensholt. Journal: Nature Communications, 2023

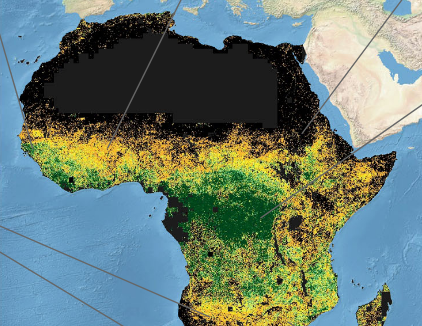

The consistent monitoring of trees both inside and outside of forests is key to sustainable land management. Current monitoring systems either ignore trees outside forests or are too expensive to be applied consistently across countries on a repeated basis. Here we use the PlanetScope nanosatellite constellation, which delivers global very high-resolution daily imagery, to map both forest and non-forest tree cover for continental Africa using images from a single year. Our prototype map of 2019 (RMSE = 9.57%, bias = −6.9%) demonstrates that a precise assessment of all tree-based ecosystems is possible at continental scale, and reveals that 29% of tree cover is found outside areas previously classified as tree cover in state-of-the-art maps, such as in croplands and grassland. Such accurate mapping of tree cover down to the level of individual trees and consistent among countries has the potential to redefine land use impacts in non-forest landscapes, move beyond the need for forest definitions, and build the basis for natural climate solutions and tree-related studies.

@article{ReinerBTSKCDHCMIOGLLSBSTDSMTF2023MoreThanOne, author = {Reiner, Florian and Brandt, Martin and Tong, Xiaoye and Skole, David and Kariryaa, Ankit and Ciais, Philippe and Davies, Andrew and Hiernaux, Pierre and Chave, Jerome and Mugabowindekwe, Maurice and Igel, Christian and Oehmcke, Stefan and Gieseke, Fabian and Li, Sizhuo and Liu, Siyu and Saatchi, Sassan S and Boucher, Peter and Singh, Jenia and Taugourdeau, Simon and Dendoncker, Morgane and Song, Xiao-Peng and Mertz, Ole and Tucker, Compton and Fensholt, Rasmus}, title = {More than one quarter of Africa's tree cover is found outside areas previously classified as forest}, journal = {Nature Communications}, year = {2023}, tags = {rs,application,ml} }

- S. Oehmcke and F. Gieseke. SDM22: Proceedings of the 2022 SIAM International Conference on Data Mining (SDM), 2022

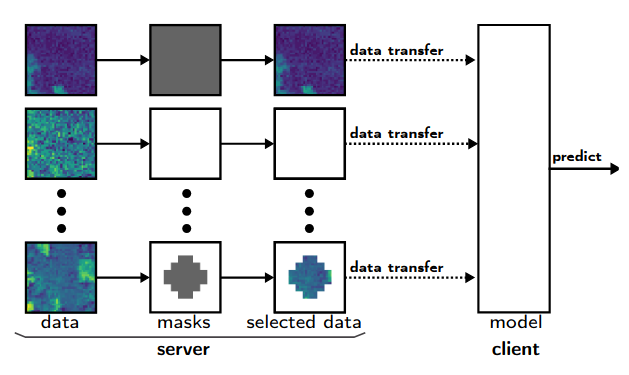

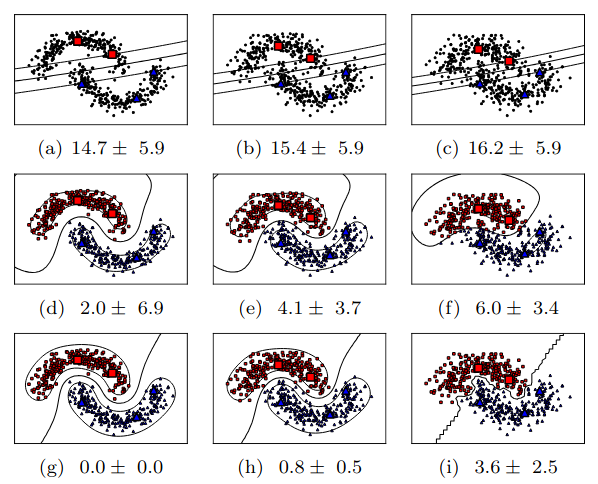

Data are often accommodated on centralized storage servers. This is the case, for instance, in remote sensing and astronomy, where projects produce several petabytes of data every year. While machine learning models are often trained on relatively small subsets of the data, the inference phase typically requires transferring significant amounts of data between the servers and the clients. In many cases, the bandwidth available per user is limited, which then renders the data transfer to be one of the major bottlenecks. In this work, we propose a framework that automatically selects the relevant parts of the input data for a given neural network. The model as well as the associated selection masks are trained simultaneously such that a good model performance is achieved while only a minimal amount of data is selected. During the inference phase, only those parts of the data have to be transferred between the server and the client. We propose both instance-independent and instance-dependent selection masks. The former ones are the same for all instances to be transferred, whereas the latter ones allow for variable transfer sizes per instance. Our experiments show that it is often possible to significantly reduce the amount of data needed to be transferred without affecting the model quality much.

@inproceedings{OehmckeG2022InputSelection, author = {Oehmcke, Stefan and Gieseke, Fabian}, title = {Input Selection for Bandwidth-Limited Neural Network Inference}, booktitle = {Proceedings of the 2022 SIAM International Conference on Data Mining (SDM)}, pages = {280--288}, editor = {Banerjee, Arindam and Zhou, Zhi-Hua and Papalexakis, Evangelos E. and Riondato, Matteo}, year = {2022}, publisher = {SIAM Publications}, address = {USA}, doi = {10.1137/1.9781611977172.32}, tags = {ml,de}, }

- S. Oehmcke, L. Li, J. Revenga, T. Nord-Larsen, K. Trepekli, F. Gieseke, and C. Igel. SIGSPATIAL22: 30th ACM SIGSPATIAL International Conference on Advances in Geographic Information Systems (ACM SIGSPATIAL 2022), 2022

Quantification of forest biomass stocks and their dynamics is important for implementing effective climate change mitigation measures. The knowledge is needed, e.g., for local forest management, studying the processes driving af-, re-, and deforestation, and can improve the accuracy of carbon-accounting. Remote sensing using airborne LiDAR can be used to perform these measurements of vegetation structure at large scale. We present deep learning systems for predicting wood volume, above-ground biomass (AGB), and subsequently above-ground carbon stocks directly from airborne LiDAR point clouds. We devise different neural network architectures for point cloud regression and evaluate them on remote sensing data of areas for which AGB estimates have been obtained from field measurements in the Danish national forest inventory. Our adaptation of Minkowski convolutional neural networks for regression gave the best results. The deep neural networks produced significantly more accurate wood volume, AGB, and carbon stock estimates compared to state-of-the-art approaches operating on basic statistics of the point clouds. In contrast to other methods, the proposed deep learning approach does not require a digital terrain model. We expect this finding to have a strong impact on LiDAR-based analyses of biomass dynamics.

@inproceedings{OehmckeLRNLTGI2022DeepLearning, author = {Oehmcke, Stefan and Li, Lei and Revenga, Jaime and Nord-Larsen, Thomas and Trepekli, Katerina and Gieseke, Fabian and Igel, Christian}, title = {Deep Learning Based 3D Point Cloud Regression for Estimating Forest Biomass}, booktitle = {30th ACM SIGSPATIAL International Conference on Advances in Geographic Information Systems (ACM SIGSPATIAL 2022)}, pages = {1--4}, editor = {Renz, Matthias and Sarwat, Mohamed}, year = {2022}, publisher = {ACM Press}, address = {New York, NY, USA}, doi = {10.1145/3557915.3561471}, tags = {ml,application,rs} }

- R. N. Masolele, V. De Sy, D. Marcos, J. Verbesselt, F. Gieseke, K. A. Mulatu, Y. Moges, H. Sebrala, C. Martius, and M. Herold. JOURNAL: GIScience and Remote Sensing, 2022

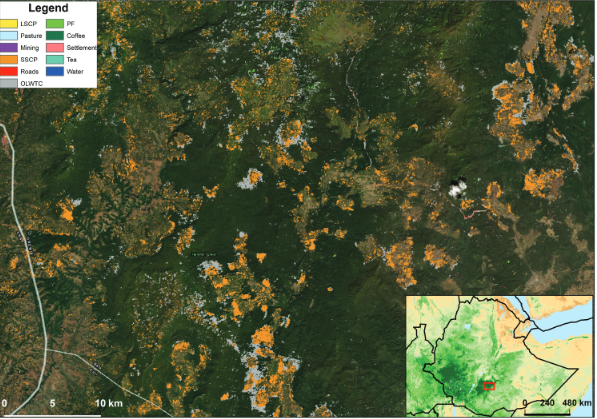

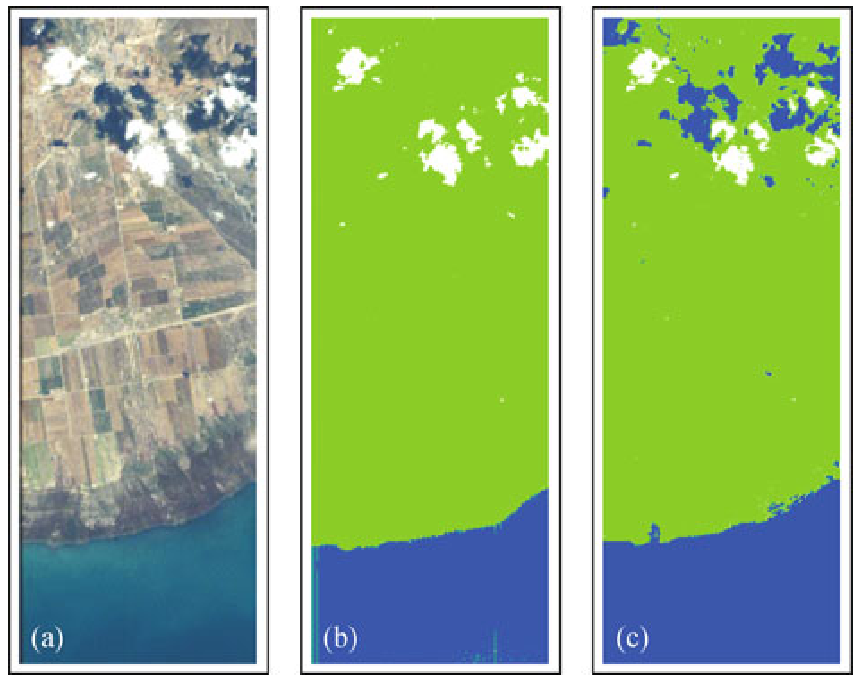

National-scale assessments of post-deforestation land-use are crucial for decreasing deforestation and forest degradation-related emissions. In this research, we assess the potential of different satellite data modalities (single-date, multi-date, multi-resolution, and an ensemble of multi-sensor images) for classifying land-use following deforestation in Ethiopia using the U-Net deep neural network architecture enhanced with attention. We performed the analysis on satellite image data retrieved across Ethiopia from freely available Landsat-8, Sentinel-2 and Planet-NICFI satellite data. The experiments aimed at an analysis of (a) single-date images from individual sensors to account for the differences in spatial resolution between image sensors in detecting land-uses, (b) ensembles of multiple images from different sensors (Planet-NICFI/Sentinel-2/Landsat-8) with different spatial resolutions, (c) the use of multi-date data to account for the contribution of temporal information in detecting land-uses, and, finally, (d) the identification of regional differences in terms of land-use following deforestation in Ethiopia. We hypothesize that choosing the right satellite imagery (sensor) type is crucial for the task. Based on a comprehensive visually interpreted reference dataset of 11 types of post-deforestation land-uses, we find that either detailed spatial patterns (single-date Planet-NICFI) or detailed temporal patterns (multi-date Sentinel-2, Landsat-8) are required for identifying land-use following deforestation, while medium-resolution single-date imagery is not sufficient to achieve high classification accuracy. We also find that adding soft-attention to the standard U-Net improved the classification accuracy, especially for small-scale land-uses. 
The models and products presented in this work can be used as a powerful data resource for governmental and forest monitoring agencies to design and monitor deforestation mitigation measures and data-driven land-use policy.

@article{MasoleleDMVGMMSMH2022UsingHighResolution, author = {Masolele, Robert N. and {De Sy}, Veronique and Marcos, Diego and Verbesselt, Jan and Gieseke, Fabian and Mulatu, Kalkidan Ayele and Moges, Yitebitu and Sebrala, Heiru and Martius, Christopher and Herold, Martin}, title = {Using high-resolution imagery and deep learning to classify land-use following deforestation: a case study in Ethiopia}, journal = {GIScience and Remote Sensing}, year = {2022}, volume = {59}, number = {1}, pages = {1446--1472}, doi = {10.1080/15481603.2022.2115619}, tags = {application,rs}, }

- M. Mugabowindekwe, M. Brandt, J. Chave, F. Reiner, D. Skole, A. Kariryaa, C. Igel, P. Hiernaux, P. Ciais, O. Mertz, X. Tong, S. Li, G. Rwanyiziri, T. Dushimiyimana, A. Ndoli, U. Valens, J. Lillesø, F. Gieseke, C. Tucker, S. S. Saatchi, and R. Fensholt. JOURNAL: Nature Climate Change, 2022

Trees sustain livelihoods and mitigate climate change but a predominance of trees outside forests and limited resources make it difficult for many tropical countries to conduct automated nation-wide inventories. Here, we propose an approach to map the carbon stock of each individual overstory tree at the national scale of Rwanda using aerial imagery from 2008 and deep learning. We show that 72% of the mapped trees are located in farmlands and savannas and 17% in plantations, accounting for 48.6% of the national aboveground carbon stocks. Natural forests cover 11% of the total tree count and 51.4% of the national carbon stocks, with an overall carbon stock uncertainty of 16.9%. The mapping of all trees allows partitioning to any landscapes classification and is urgently needed for effective planning and monitoring of restoration activities as well as for optimization of carbon sequestration, biodiversity and economic benefits of trees.

@article{MugabowindekweBCRSKIHCMTLRDNVLGTSF2022NationWide, author = {Mugabowindekwe, Maurice and Brandt, Martin and Chave, Jerome and Reiner, Florian and Skole, David and Kariryaa, Ankit and Igel, Christian and Hiernaux, Pierre and Ciais, Philippe and Mertz, Ole and Tong, Xiaoye and Li, Sizhuo and Rwanyiziri, Gaspard and Dushimiyimana, Thaulin and Ndoli, Alain and Valens, Uwizeyimana and Lillesø, Jens-Peter and Gieseke, Fabian and Tucker, Compton and Saatchi, Sassan S and Fensholt, Rasmus}, title = {Nation-wide mapping of tree-level aboveground carbon stocks in Rwanda}, journal = {Nature Climate Change}, year = {2022}, volume = {13}, doi = {10.1038/s41558-022-01544-w}, tags = {application,rs}, }

- J. C. Revenga, K. Trepekli, S. Oehmcke, R. Jensen, L. Li, C. Igel, F. Gieseke, and T. Friborg. JOURNAL: Remote Sensing (Remote Sens.), 2022

Current endeavors to enhance the accuracy of in situ above-ground biomass (AGB) prediction for croplands rely on close-range monitoring surveys that use unstaffed aerial vehicles (UAVs) and mounted sensors. In precision agriculture, light detection and ranging (LiDAR) technologies are currently used to monitor crop growth, plant phenotyping, and biomass dynamics at the ecosystem scale. In this study, we utilized a UAV–LiDAR sensor to monitor two crop fields and a set of machine learning (ML) methods to predict real-time AGB over two consecutive years in the region of Mid-Jutland, Denmark. During each crop growing period, UAV surveys were conducted in parallel with destructive AGB sampling every 7–15 days, and the resulting AGB samples were used as the ground truth data. We evaluated the ability of the ML models to estimate the real-time values of AGB at a sub-meter resolution (0.17–0.52 m²). An extremely randomized trees (ERT) regressor was selected for the regression analysis, based on its predictive performance for the first year’s growing season. The model was retrained using previously identified hyperparameters to predict the AGB of the crops in the second year. The ERT performed AGB estimation using height and reflectance metrics from LiDAR-derived point cloud data and achieved a prediction performance of R² = 0.48 at a spatial resolution of 0.35 m². The prediction performance could be improved significantly by aggregating adjacent predictions (R² = 0.71 and R² = 0.93 at spatial resolutions of 1 m² and 2 m², respectively) as they ultimately converged to the reference biomass values because any individual errors averaged out. The AGB prediction results were examined as a function of predictor type, training set size, sampling resolution, phenology, and canopy density. 
The results demonstrated that when combined with ML regression methods, the UAV–LiDAR method could be used to provide accurate real-time AGB prediction for crop fields at a high resolution, thereby providing a way to map their biochemical constituents.
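The averaging effect mentioned in the abstract can be made explicit with a short calculation. As a simplifying assumption that the abstract does not state, suppose each fine-resolution prediction $\hat{y}_i = y_i + \varepsilon_i$ carries an independent, zero-mean error with variance $\sigma^2$. Averaging $n$ adjacent predictions then gives:

```latex
\bar{\hat{y}} = \frac{1}{n}\sum_{i=1}^{n} \hat{y}_i
             = \bar{y} + \frac{1}{n}\sum_{i=1}^{n} \varepsilon_i,
\qquad
\operatorname{Var}\!\left(\frac{1}{n}\sum_{i=1}^{n} \varepsilon_i\right)
             = \frac{\sigma^2}{n}.
```

Under this assumption the error variance of the aggregated estimate shrinks in proportion to $1/n$, which is consistent with the reported rise in R² at coarser resolutions. Spatially correlated errors would shrink more slowly than this idealized rate.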

@article{RevengaTOJLIGF2022AboveGround, author = {Revenga, Jaime C. and Trepekli, Katerina and Oehmcke, Stefan and Jensen, Rasmus and Li, Lei and Igel, Christian and Gieseke, Fabian and Friborg, Thomas}, title = {Above-Ground Biomass Prediction for Croplands at a Sub-Meter Resolution Using UAV–LiDAR and Machine Learning Methods}, journal = {Remote Sensing (Remote Sens.)}, year = {2022}, volume = {14}, number = {16}, pages = {3912}, doi = {10.3390/rs14163912}, tags = {application,rs} }

- Y. Dai, F. Gieseke, S. Oehmcke, Y. Wu, and K. Barnard. WACV21: Proceedings of the Workshop on Applications of Computer Vision (WACV), 2021

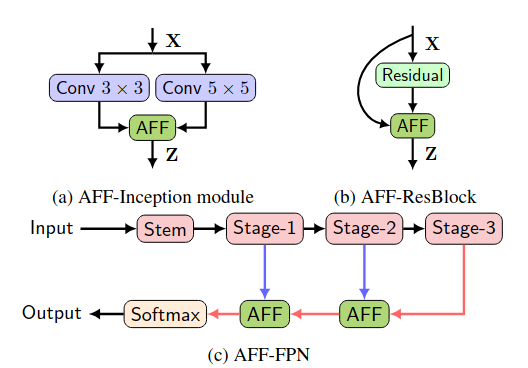

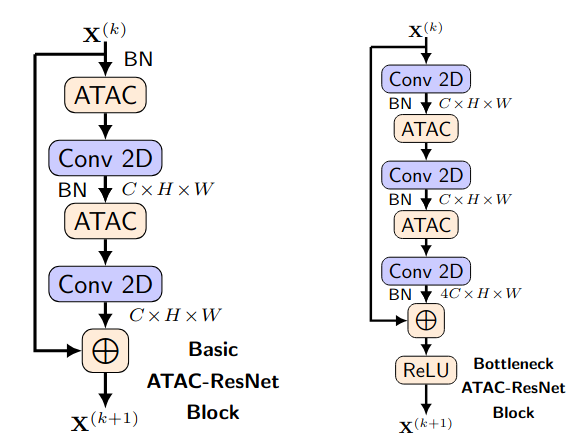

Feature fusion, the combination of features from different layers or branches, is an omnipresent part of modern network architectures. It is often implemented via simple operations, such as summation or concatenation, but this might not be the best choice. In this work, we propose a uniform and general scheme, namely attentional feature fusion, which is applicable for most common scenarios, including feature fusion induced by short and long skip connections as well as within Inception layers. To better fuse features of inconsistent semantics and scales, we propose a multi-scale channel attention module, which addresses issues that arise when fusing features given at different scales. We also demonstrate that the initial integration of feature maps can become a bottleneck and that this issue can be alleviated by adding another level of attention, which we refer to as iterative attentional feature fusion. With fewer layers or parameters, our models outperform state-of-the-art networks on both CIFAR-100 and ImageNet datasets, which suggests that more sophisticated attention mechanisms for feature fusion hold great potential to consistently yield better results compared to their direct counterparts. Our codes and trained models are available online.

@inproceedings{DaiGOWB2021Attentional, author = {Dai, Yimian and Gieseke, Fabian and Oehmcke, Stefan and Wu, Yiquan and Barnard, Kobus}, title = {Attentional Feature Fusion}, booktitle = {Proceedings of the Workshop on Applications of Computer Vision (WACV)}, pages = {3559--3568}, year = {2021}, publisher = {IEEE}, doi = {10.1109/WACV48630.2021.00360}, tags = {ml}, }

- Dataset Sensitive Autotuning of Multi-versioned Code Based on Monotonic Properties: Autotuning in Futhark (Best Paper Award). P. Munksgaard, S. L. Breddam, T. Henriksen, F. Gieseke, and C. E. Oancea. TFP21: Proceedings of the 22nd International Symposium on Trends in Functional Programming (TFP), 2021

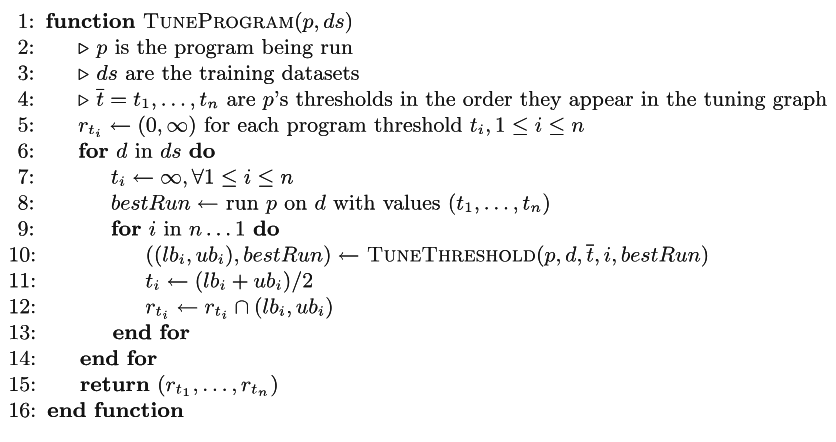

Functional languages allow rewrite-rule systems that aggressively generate a multitude of semantically-equivalent but differently-optimized code versions. In the context of GPGPU execution, this paper addresses the important question of how to compose these code versions into a single program that (near-)optimally discriminates them across different datasets. Rather than aiming at a general autotuning framework reliant on stochastic search, we argue that in some cases, a more effective solution can be obtained by customizing the tuning strategy for the compiler transformation producing the code versions. We present a simple and highly-composable strategy which requires that the (dynamic) program property used to discriminate between code versions conforms with a certain monotonicity assumption. Assuming the monotonicity assumption holds, our strategy guarantees that if an optimal solution exists it will be found. If an optimal solution doesn’t exist, our strategy produces human tractable and deterministic results that provide insights into what went wrong and how it can be fixed. We apply our tuning strategy to the incremental-flattening transformation supported by the publicly-available Futhark compiler and compare with a previous black-box tuning solution that uses the popular OpenTuner library. We demonstrate the feasibility of our solution on a set of standard datasets of real-world applications and public benchmark suites, such as Rodinia and FinPar. We show that our approach shortens the tuning time by a factor of 6× on average, and more importantly, in five out of eleven cases, it produces programs that are (as high as 10×) faster than the ones produced by the OpenTuner-based technique.
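The role of the monotonicity assumption can be illustrated with a deliberately simplified sketch. It is not the paper's algorithm: it assumes just two code versions, a single numeric program property per dataset, and the hypothetical rule "run version A when the property is at least the threshold". The function name and the measurement tuples are invented for illustration.

```python
import math


def tune_threshold(measurements):
    """Pick a threshold on a program property that separates two code versions.

    measurements: list of (property_value, time_version_a, time_version_b).
    Returns (threshold, conflicts). If the preferences are monotonic in the
    property, a separating threshold exists and conflicts is empty. Otherwise
    threshold is None and conflicts lists the property values that violate
    monotonicity, giving a deterministic hint at what went wrong.
    """
    prefers_a = sorted(p for p, ta, tb in measurements if ta < tb)
    prefers_b = sorted(p for p, ta, tb in measurements if tb < ta)
    if not prefers_a:
        return math.inf, []       # version A is never faster
    if not prefers_b:
        return -math.inf, []      # version A is always faster
    lo, hi = prefers_b[-1], prefers_a[0]
    if lo < hi:
        return hi, []             # every dataset is routed to its faster version
    conflicts = [p for p in prefers_b if p >= hi] + [p for p in prefers_a if p <= lo]
    return None, sorted(conflicts)


# Hypothetical timings: A wins on the two larger datasets only.
ok = tune_threshold([(10, 5.0, 2.0), (100, 4.0, 3.0), (1000, 3.0, 6.0), (5000, 2.0, 9.0)])
# Non-monotonic case: B wins again at 2000 after A won at 1000.
bad = tune_threshold([(10, 5.0, 2.0), (1000, 3.0, 6.0), (2000, 7.0, 4.0)])
```

In the monotonic case one pass over the measurements suffices, which hints at why a strategy tailored to the transformation can be much cheaper than a stochastic search over thresholds.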

@inproceedings{MunksgaardBHGO2021DatasetSensitive, author = {Munksgaard, Philip and Breddam, Svend Lund and Henriksen, Troels and Gieseke, Fabian and Oancea, Cosmin Eugen}, title = {Dataset Sensitive Autotuning of Multi-versioned Code Based on Monotonic Properties --- Autotuning in Futhark}, booktitle = {Proceedings of the 22nd International Symposium on Trends in Functional Programming (TFP)}, pages = {3--23}, series = {Lecture Notes in Computer Science}, volume = {12834}, year = {2021}, publisher = {Springer}, address = {Virtual Event}, doi = {10.1007/978-3-030-83978-9}, tags = {de}, }

- S. Oehmcke, T. Nyegaard-Signori, K. Grogan, and F. Gieseke. IEEE BIGDATA 2021: 2021 IEEE International Conference on Big Data (Big Data), 2021

Canopy height is a vital indicator for assessing carbon uptake and productivity of forests. However, precise measurements, such as from airborne or spaceborne 3D laser scanning (LiDAR), are expensive and usually cover only small areas. In this work, we propose a novel deep learning model that can generate detailed maps of tree canopy heights. In contrast to previous approaches that use a single image as input, we process multi-temporal data via an adaptation of the popular U-Net architecture that is based on the EfficientNet and 3D convolution operators. To that end, our model receives multi-spectral Landsat satellite imagery as input and can predict continuous height maps. As labeled data, we resort to spatially sparse LiDAR data from ICESat-2. Thus, with such a model, one can produce dense canopy height maps given only multi-spectral Landsat data. Our experimental evaluation shows that our model outperforms existing and improved single-temporal models. To test generalizability, we created a non-overlapping dataset to evaluate our approach and further tested the model performance on out-of-distribution data. The results show that our model can successfully learn drastic changes in distribution.

@inproceedings{OehmckeNSGG2021Estimating, author = {Oehmcke, Stefan and Nyegaard-Signori, Thomas and Grogan, Kenneth and Gieseke, Fabian}, title = {Estimating Forest Canopy Height With Multi-Spectral and Multi-Temporal Imagery Using Deep Learning}, booktitle = {2021 {IEEE} International Conference on Big Data (Big Data)}, pages = {4915--4924}, editor = {Chen, Yixin and Ludwig, Heiko and Tu, Yicheng and Fayyad, Usama M. and Zhu, Xingquan and Hu, Xiaohua and Byna, Suren and Liu, Xiong and Zhang, Jianping and Pan, Shirui and Papalexakis, Vagelis and Wang, Jianwu and Cuzzocrea, Alfredo and Ordonez, Carlos}, year = {2021}, publisher = {Wiley-IEEE Press}, address = {Orlando, US}, doi = {10.1109/BigData52589.2021.9672018}, tags = {application,rs}, }

- R. N. Masolele, V. De Sy, M. Herold, D. Marcos, J. Verbesselt, F. Gieseke, A. G. Mullissa, and C. Martius. JOURNAL: Remote Sensing of Environment, 2021

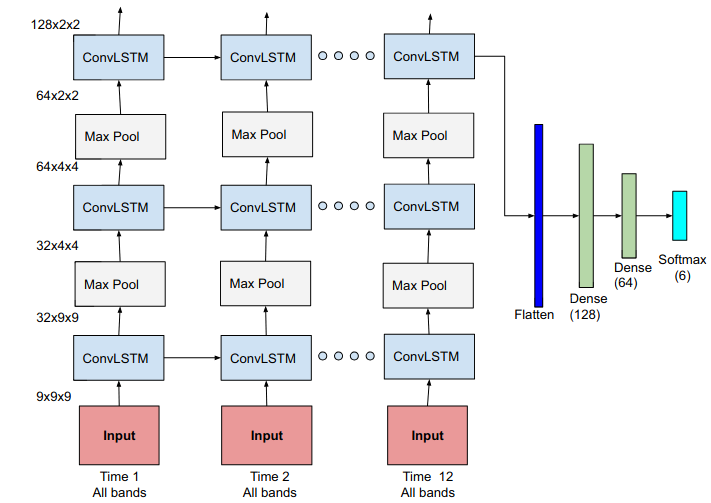

Assessing land-use following deforestation is vital for reducing emissions from deforestation and forest degradation. In this paper, for the first time, we assess the potential of spatial, temporal and spatio-temporal deep learning methods for large-scale classification of land-use following tropical deforestation using dense satellite time series over six years on the pan-tropical scale (incl. Latin America, Africa, and Asia). Based on an extensive reference database of six forest to land-use conversion types, we find that the spatio-temporal models achieved substantially higher F1-scores than models that account only for spatial or temporal patterns. Although all models performed better when the scope of the problem was limited to a single continent, the spatial models were more competitive than the temporal ones in this setting. These results suggest that the spatial patterns of land-use within a continent share more commonalities than the temporal patterns and the spatial patterns across continents. This work explores the feasibility of extending and complementing previous efforts for characterizing follow-up land-use after deforestation at a small scale via human visual interpretation of high-resolution RGB imagery. It supports the usage of fast and automated large-scale land-use classification and showcases the value of deep learning methods combined with spatio-temporal satellite data to effectively address the complex task of identifying land-use following deforestation in a scalable and cost-effective manner.

@article{MasoleleDHMVGMM2021SpatialAnd, author = {Masolele, Robert N. and {De Sy}, Veronique and Herold, Martin and Marcos, Diego and Verbesselt, Jan and Gieseke, Fabian and Mullissa, Adugna G. and Martius, Christopher}, title = {Spatial and temporal deep learning methods for deriving land-use following deforestation: A pan-tropical case study using Landsat time series}, journal = {Remote Sensing of Environment}, year = {2021}, volume = {264}, pages = {112600}, doi = {10.1016/j.rse.2021.112600}, tags = {application,rs}, }

- Y. Dai, S. Oehmcke, F. Gieseke, Y. Wu, and K. Barnard. ICPR20: Proceedings of the 25th International Conference on Pattern Recognition (ICPR), 2020

@inproceedings{DaiOGWB2020AttentionAs, author = {Dai, Yimian and Oehmcke, Stefan and Gieseke, Fabian and Wu, Yiquan and Barnard, Kobus}, title = {Attention as Activation}, booktitle = {Proceedings of the 25th International Conference on Pattern Recognition (ICPR)}, pages = {9156--9163}, year = {2020}, publisher = {IEEE}, address = {Milan, Italy}, doi = {10.1109/ICPR48806.2021.9413020}, tags = {ml}, }

- F. Gieseke, S. Rosca, T. Henriksen, J. Verbesselt, and C. E. Oancea. ICDE20: Proceedings of the 36th IEEE International Conference on Data Engineering (ICDE), 2020

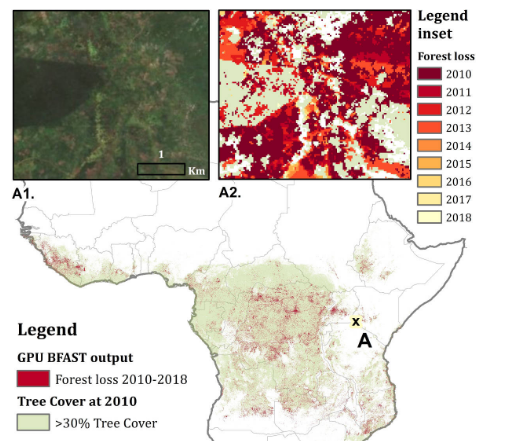

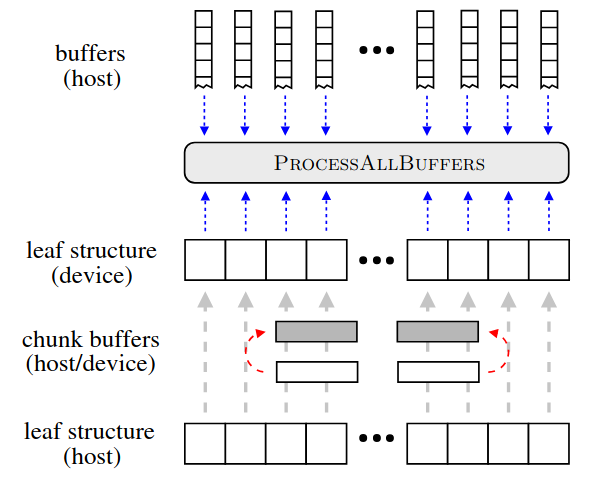

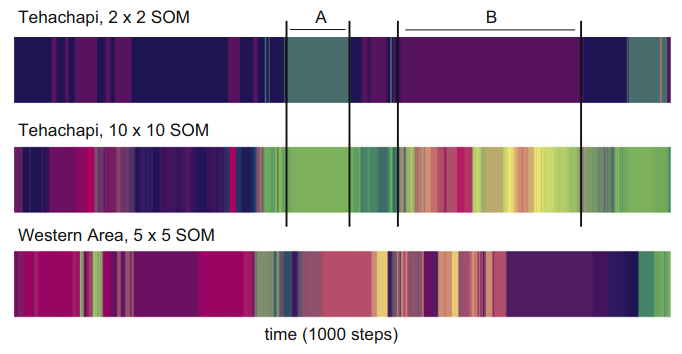

Large amounts of satellite data are now becoming available, which, in combination with appropriate change detection methods, offer the opportunity to derive accurate information on timing and location of disturbances such as deforestation events across the earth surface. Typical scenarios require the analysis of billions of image patches/pixels. While various change detection techniques have been proposed in the literature, the associated implementations usually do not scale well, which renders the corresponding analyses computationally very expensive or even impossible. In this work, we propose a novel massively-parallel implementation for a state-of-the-art change detection method and demonstrate its potential in the context of monitoring deforestation. The novel implementation can handle large scenarios in a few hours or days using cheap commodity hardware, compared to weeks or even years using the existing publicly available code, and enables researchers, for the first time, to conduct global-scale analyses covering large parts of our Earth using little computational resources. From a technical perspective, we provide a high-level parallel algorithm specification along with several performance-critical optimizations dedicated to efficiently map the specified parallelism to modern parallel devices. While a particular change detection method is addressed in this work, the algorithmic building blocks provided are also of immediate relevance to a wide variety of related approaches in remote sensing and other fields.

@inproceedings{GiesekeRHVO2020MassivelyParallel, author = {Gieseke, Fabian and Rosca, Sabina and Henriksen, Troels and Verbesselt, Jan and Oancea, Cosmin Eugen}, title = {Massively-Parallel Change Detection for Satellite Time Series Data with Missing Values}, booktitle = {Proceedings of the 36th {IEEE} International Conference on Data Engineering (ICDE)}, pages = {385--396}, year = {2020}, address = {Dallas, USA}, doi = {10.1109/ICDE48307.2020.00040}, tags = {de,rs}, }

- C. E. Oancea, T. Robroek, and F. Gieseke. IEEE BIGDATA 2020: 2020 IEEE International Conference on Big Data, 2020

Nearest neighbour fields accurately and intuitively describe the transformation between two images and have been heavily used in computer vision. Generating such fields, however, is not an easy task due to the induced computational complexity, which quickly grows with the sizes of the images. Modern parallel devices such as graphics processing units offer a viable way of reducing the practical run time of such compute-intensive tasks. In this work, we propose a novel parallel implementation for one of the state-of-the-art methods for the computation of nearest neighbour fields, called propagation-assisted k-d trees. The resulting implementation yields valuable computational savings over a corresponding multi-core implementation. Additionally, it is tuned to consume only little additional memory and is, hence, capable of dealing with high-resolution image data, which is vital as image quality standards keep rising.

@inproceedings{OanceaRG2020Approximate, author = {Oancea, Cosmin Eugen and Robroek, Ties and Gieseke, Fabian}, title = {Approximate Nearest-Neighbour Fields via Massively-Parallel Propagation-Assisted K-D Trees}, booktitle = {2020 {IEEE} International Conference on Big Data}, pages = {5172--5181}, year = {2020}, publisher = {IEEE}, doi = {10.1109/BigData50022.2020.9378426}, tags = {de,ml}, }

- S. Oehmcke, T. K. Chen, A. V. Prishchepov, and F. Gieseke. BIGSPATIAL20: Proceedings of the 9th ACM SIGSPATIAL International Workshop on Analytics for Big Geospatial Data, 2020

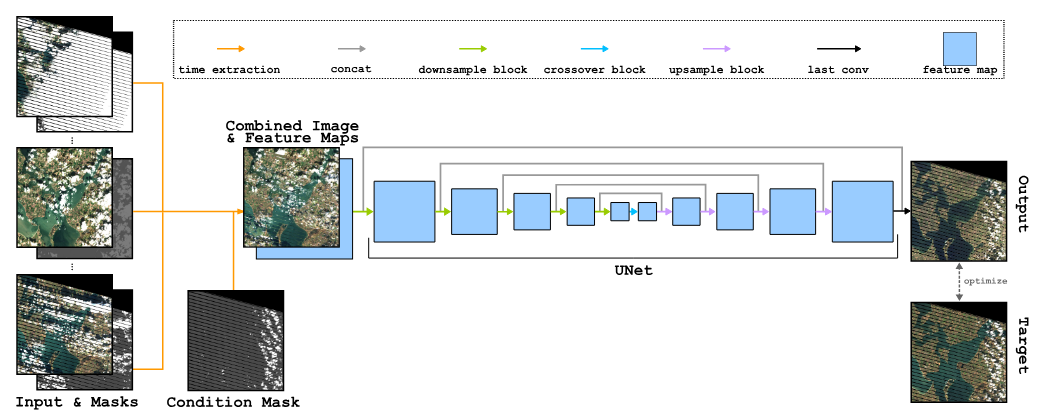

Optical satellite images are important for environmental monitoring. Unfortunately, such images are often affected by distortions, such as clouds, shadows, or missing data. This work proposes a deep learning approach for cleaning and imputing satellite images, which could serve as a reliable preprocessing step for spatial and spatio-temporal analyses. More specifically, a coherent and cloud-free image for a specific target date and region is created based on a sequence of images of that region obtained at previous dates. Our model first extracts information from the previous time steps via a special gating function and then resorts to a modified version of the well-known U-Net architecture to obtain the desired output image. The model uses supplementary data, namely the approximate cloud coverage of input images, the temporal distance to the target time, and a missing data mask for each input time step. During the training phase we condition our model with the target's cloud coverage and missing values (disabled in production), which allows us to use data afflicted by distortion during training and thus does not require pre-selection of distortion-free data. Our experimental evaluation, conducted on data of the Landsat missions, shows that our approach outperforms the commonly utilized approach that resorts to taking the median of cloud-free pixels for a given position. This is especially the case when the quality of the data for the considered period is poor (e.g., lack of cloud-free images during the winter/fall periods). Our deep learning approach makes it possible to improve the utility of the entire Landsat archive, the only existing global medium-resolution free-access satellite archive dating back to the 1970s. It therefore holds scientific and societal potential for future analyses conducted on data from this and other satellite imagery repositories.
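The baseline the abstract compares against, the per-pixel median of cloud-free observations, is simple enough to sketch directly. The version below is an illustrative pure-Python rendering; the function name, the nested-list image layout, and the use of `None` for pixels without any clear observation are assumptions made here.

```python
from statistics import median


def median_composite(series, cloud_masks):
    """Per-pixel temporal median that ignores cloudy or missing observations.

    series:      list of T single-band images, each a list of rows of floats
    cloud_masks: same shape, True where the pixel is cloudy or missing
    Returns one image; a pixel with no clear observation at all is None.
    """
    height, width = len(series[0]), len(series[0][0])
    out = [[None] * width for _ in range(height)]
    for r in range(height):
        for c in range(width):
            clear = [img[r][c] for img, mask in zip(series, cloud_masks)
                     if not mask[r][c]]
            if clear:
                out[r][c] = median(clear)
    return out


# Three time steps of a 1x2 image; the second pixel is cloudy every time.
series = [[[1.0, 9.0]], [[3.0, 9.0]], [[100.0, 9.0]]]
masks = [[[False, True]], [[False, True]], [[True, True]]]
composite = median_composite(series, masks)
```

The second pixel shows the weakness the abstract points to: when a period has no cloud-free observation, the median baseline has nothing to work with, whereas a learned model can still produce an estimate.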

@inproceedings{OehmckeTHPG2020CreatingCloudFree, author = {Oehmcke, Stefan and Chen, Tzu-Hsin Karen and Prishchepov, Alexander V. and Gieseke, Fabian}, title = {Creating Cloud-Free Satellite Imagery from Image Time Series with Deep Learning}, booktitle = {Proceedings of the 9th ACM SIGSPATIAL International Workshop on Analytics for Big Geospatial Data}, pages = {3:1--3:10}, year = {2020}, publisher = {ACM}, address = {Seattle, USA}, doi = {10.1145/3423336.3429345}, tags = {ml,de,rs,application}, }

- M. Brandt, C. Tucker, A. Kariryaa, K. Rasmussen, C. Abel, J. Small, J. Chave, L. Rasmussen, P. Hiernaux, A. Diouf, L. Kergoat, O. Mertz, C. Igel, F. Gieseke, J. Schöning, S. Li, K. Melocik, J. Meyer, S. Sinno, E. Romero, E. Glennie, A. Montagu, M. Dendoncker, and R. Fensholt. JOURNAL: Nature, 2020

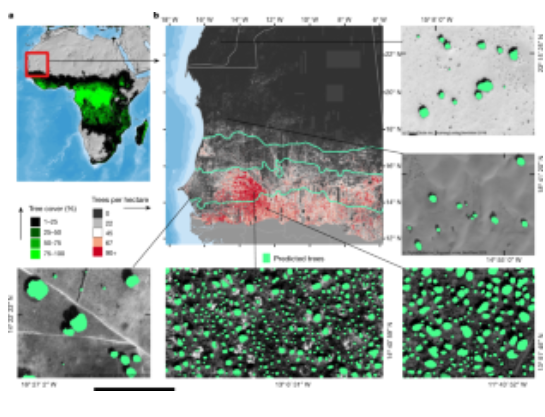

A large proportion of dryland trees and shrubs (hereafter referred to collectively as trees) grow in isolation, without canopy closure. These non-forest trees have a crucial role in biodiversity, and provide ecosystem services such as carbon storage, food resources and shelter for humans and animals. However, most public interest relating to trees is devoted to forests, and trees outside of forests are not well-documented. Here we map the crown size of each tree more than 3 m² in size over a land area that spans 1.3 million km² in the West African Sahara, Sahel and sub-humid zone, using submetre-resolution satellite imagery and deep learning. We detected over 1.8 billion individual trees (13.4 trees per hectare), with a median crown size of 12 m², along a rainfall gradient from 0 to 1,000 mm per year. The canopy cover increases from 0.1% (0.7 trees per hectare) in hyper-arid areas, through 1.6% (9.9 trees per hectare) in arid and 5.6% (30.1 trees per hectare) in semi-arid zones, to 13.3% (47 trees per hectare) in sub-humid areas. Although the overall canopy cover is low, the relatively high density of isolated trees challenges prevailing narratives about dryland desertification, and even the desert shows a surprisingly high tree density. Our assessment suggests a way to monitor trees outside of forests globally, and to explore their role in mitigating degradation, climate change and poverty.

@article{BrandtTKRASCRHDKMIGSLMMSRGMDF2020AnUnexpectedly, author = {Brandt, M and Tucker, C and Kariryaa, A and Rasmussen, K and Abel, C and Small, J and Chave, J and Rasmussen, L and Hiernaux, P and Diouf, A and Kergoat, L and Mertz, O and Igel, C and Gieseke, F and Schöning, J and Li, S and Melocik, K and Meyer, J and Sinno, S and Romero, E and Glennie, E and Montagu, A and Dendoncker, M and Fensholt, R}, title = {An unexpectedly large count of trees in the West African Sahara and Sahel}, journal = {Nature}, year = {2020}, volume = {2020}, doi = {10.1038/s41586-020-2824-5}, tags = {application,rs,ml} }

- E. Hamunyela, S. Rosca, A. Mirt, E. Engle, M. Herold, F. Gieseke, and J. Verbesselt. JOURNAL: Remote Sensing, 2020

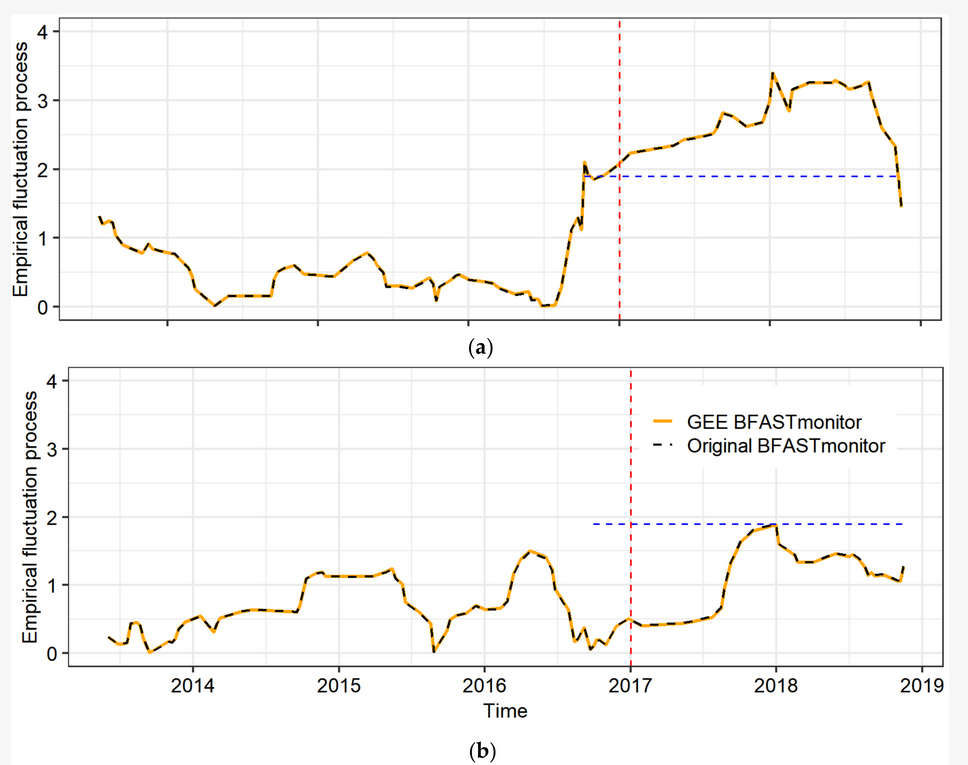

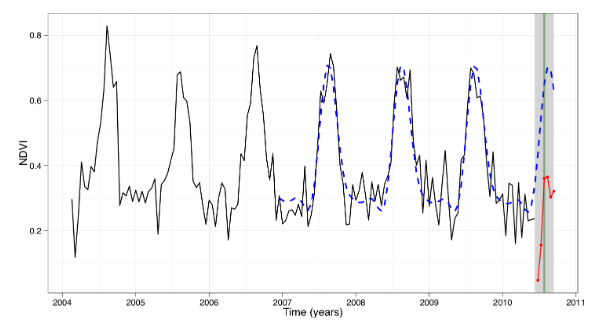

Monitoring of abnormal changes on the earth’s surface (e.g., forest disturbance) has improved greatly in recent years because of satellite remote sensing. However, high computational costs inherently associated with processing and analysis of satellite data often inhibit large-area and sub-annual monitoring. Normal seasonal variations also complicate the detection of abnormal changes at sub-annual scale in the time series of satellite data. Recently, however, computationally powerful platforms, such as the Google Earth Engine (GEE), have been launched to support large-area analysis of satellite data. Change detection methods with the capability to detect abnormal changes in time series data while accounting for normal seasonal variations have also been developed but are computationally intensive. Here, we report an implementation of BFASTmonitor (Breaks For Additive Season and Trend monitor) on GEE to support large-area and sub-annual change monitoring using satellite data available in GEE. BFASTmonitor is a data-driven unsupervised change monitoring approach that detects abnormal changes in time series data, with near real-time monitoring capabilities. Although BFASTmonitor has been widely used in forest cover loss monitoring, it is a generic change monitoring approach that can be used to monitor changes in various time series data. Using Landsat time series for normalised difference moisture index (NDMI), we evaluated the performance of our GEE BFASTmonitor implementation (GEE BFASTmonitor) by detecting forest disturbance at three forest areas (humid tropical forest, dry tropical forest, and miombo woodland) while comparing it to the original R-based BFASTmonitor implementation (original BFASTmonitor). A map-to-map comparison showed that the spatial and temporal agreements on forest disturbance between the original and our GEE BFASTmonitor implementations were high. 
At each site, the spatial agreement was more than 97%, whereas the temporal agreement was over 94%. The high spatial and temporal agreement shows that we have properly translated and implemented the BFASTmonitor algorithm on GEE. Naturally, due to different numerical solvers being used for regression model fitting in R and GEE, small differences could be observed in the outputs. These differences were most noticeable at the dry tropical forest and miombo woodland sites, where the forest exhibits strong seasonality. To make GEE BFASTmonitor accessible to non-technical users, we developed a web application with a simplified user interface. We also created a JavaScript-based GEE BFASTmonitor package that can be imported as a module. Overall, our GEE BFASTmonitor implementation fills an important gap in large-area environmental change monitoring using earth observation data.

@article{HamunyelaRMEHGV2020Implementation, author = {Hamunyela, Eliakim and Rosca, Sabina and Mirt, Andrei and Engle, Eric and Herold, Martin and Gieseke, Fabian and Verbesselt, Jan}, title = {Implementation of BFASTmonitor Algorithm on Google Earth Engine to Support Large-Area and Sub-Annual Change Monitoring Using Earth Observation Data}, journal = {Remote Sensing}, year = {2020}, volume = {12}, number = {18}, doi = {10.3390/rs12182953}, tags = {application,rs} }

- V. Ko, S. Oehmcke, and F. Gieseke. IEEE BIGDATA 2019: 2019 IEEE International Conference on Big Data (IEEE BigData), 2019

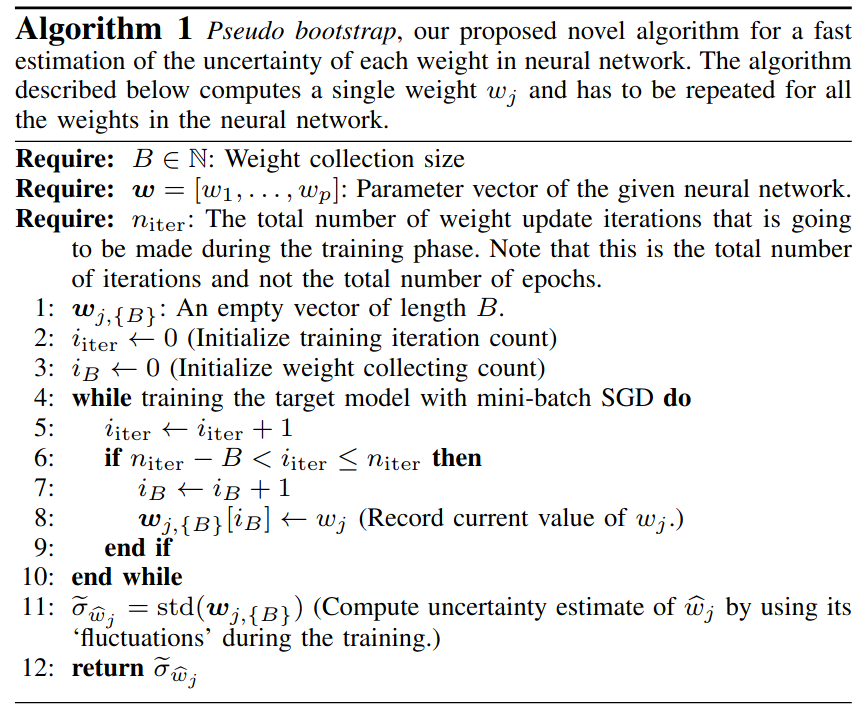

Neural networks have achieved dramatic improvements in recent years and represent the state-of-the-art methods for many real-world tasks nowadays. One drawback is, however, that many of these models are overparameterized, which makes them both computationally and memory intensive. Furthermore, overparameterization can also lead to undesired overfitting side-effects. Inspired by recently proposed magnitude-based pruning schemes and the Wald test from the field of statistics, we introduce a novel magnitude and uncertainty (M&U) pruning criterion that helps to lessen such shortcomings. One important advantage of our M&U pruning criterion is that it is scale-invariant, a property that the magnitude-based pruning criterion lacks. In addition, we present a “pseudo bootstrap” scheme, which can efficiently estimate the uncertainty of the weights by using their update information during training. Our experimental evaluation, which is based on various neural network architectures and datasets, shows that our new criterion leads to more compressed models compared to models that are solely based on magnitude-based pruning criteria, with, at the same time, less loss in predictive power.
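A rough sketch of why a Wald-style criterion is scale-invariant is given below. It is an illustration under assumptions, not the paper's exact M&U formula or its pseudo bootstrap: here the uncertainty of a weight is simply the sample standard deviation of a few hypothetical snapshots taken during training, and the score is the absolute mean divided by that deviation.

```python
from statistics import mean, stdev


def wald_like_score(snapshots, eps=1e-12):
    """Magnitude divided by uncertainty for one weight (illustrative only).

    snapshots: values of the same weight recorded at several training steps.
    """
    return abs(mean(snapshots)) / (stdev(snapshots) + eps)


def prune_mask(weight_histories, n_keep):
    """Keep the n_keep weights with the highest score (True = keep)."""
    scores = [wald_like_score(h) for h in weight_histories]
    threshold = sorted(scores, reverse=True)[n_keep - 1]
    return [s >= threshold for s in scores]


histories = [
    [1.0, 1.1, 0.9],       # small but stable weight
    [0.5, -0.5, 0.1],      # small and noisy weight
    [10.0, 30.0, -35.0],   # large but very noisy weight
]
mask = prune_mask(histories, n_keep=1)

# Rescaling a weight's history leaves its score (almost exactly) unchanged.
same = abs(wald_like_score([1.0, 1.1, 0.9])
           - wald_like_score([1000.0, 1100.0, 900.0])) < 1e-6
```

A purely magnitude-based rule would favour the third weight because its values are largest, while the ratio favours the first, whose value is small but consistently estimated.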

@inproceedings{KoOG2019MagnitudeAnd, author = {Ko, Vinnie and Oehmcke, Stefan and Gieseke, Fabian}, title = {Magnitude and Uncertainty Pruning Criterion for Neural Networks}, booktitle = {2019 {IEEE} International Conference on Big Data {(IEEE} BigData)}, pages = {2317--2326}, year = {2019}, publisher = {IEEE}, address = {Los Angeles, USA}, doi = {10.1109/BigData47090.2019.9005692}, url = {https://doi.org/10.1109/BigData47090.2019.9005692}, tags = {ml}, }

- S. Oehmcke, C. Thrysøe, A. Borgstad, M. V. Salles, M. Brandt, and F. Gieseke. IEEE BIGDATA 2019: 2019 IEEE International Conference on Big Data (IEEE BigData), 2019

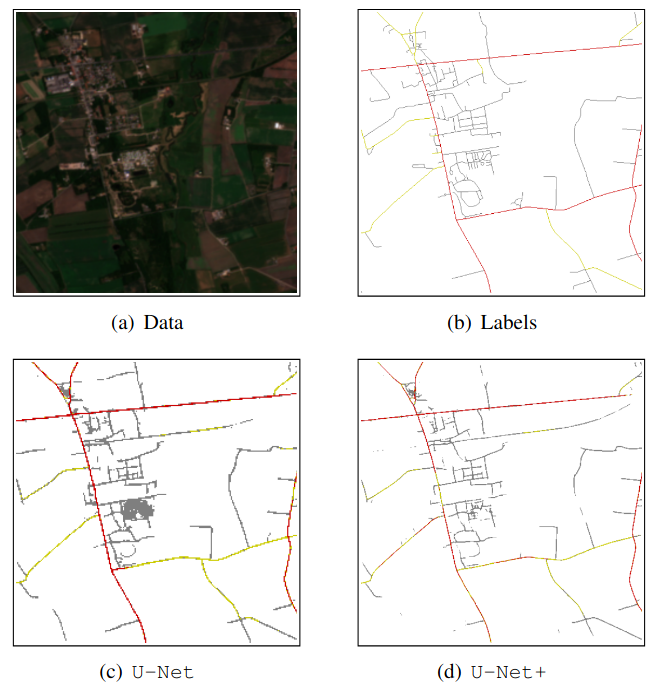

Massive amounts of satellite data have been gathered over time, holding the potential to unveil a spatiotemporal chronicle of the surface of Earth. These data allow scientists to investigate various important issues, such as land use changes, on a global scale. However, not all land-use phenomena are equally visible in satellite imagery. In particular, the creation of an inventory of the planet’s road infrastructure remains a challenge, despite being crucial for analyzing urbanization patterns and their impact. To this end, this work advances data-driven approaches for the automatic identification of roads based on open satellite data. Given the typical resolutions of these historical satellite data, we observe that there is inherent variation in the visibility of different road types. Based on this observation, we propose two deep learning frameworks that extend state-of-the-art deep learning methods by formalizing road detection as an ordinal classification task. In contrast to related schemes, one of the two models also resorts to satellite time series data that are potentially affected by missing data and cloud occlusion. Taking these time series data into account eliminates the need to manually curate datasets of high-quality image tiles, substantially simplifying the application of such models on a global scale. We evaluate our approaches on a dataset that is based on Sentinel-2 satellite imagery and OpenStreetMap vector data. Our results indicate that the proposed models can successfully identify large and medium-sized roads. We also discuss opportunities and challenges related to the detection of roads and other infrastructure on a global scale.
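To illustrate what treating road detection as an ordinal classification task can look like, here is a minimal sketch of one common encoding: a label with K ordered classes becomes K-1 binary targets, each answering "is the class greater than j?". The abstract does not say which ordinal formulation the two models use, so this encoding, the class names, and the function names are assumptions made for illustration only.

```python
# Hypothetical ordered road classes, from least to most visible.
ROAD_CLASSES = ["no road", "small road", "medium road", "large road"]


def ordinal_encode(label, n_classes):
    """Encode class index `label` as n_classes - 1 cumulative binary targets."""
    return [1 if label > j else 0 for j in range(n_classes - 1)]


def ordinal_decode(probabilities, threshold=0.5):
    """Predict a class as the number of leading outputs at or above the threshold."""
    label = 0
    for p in probabilities:
        if p < threshold:
            break
        label += 1
    return label


targets = ordinal_encode(2, len(ROAD_CLASSES))   # targets for "medium road"
predicted = ordinal_decode([0.9, 0.7, 0.2])      # per-threshold outputs of some model
```

With this encoding, confusing a large road with a medium road flips a single binary target, while confusing it with "no road" flips all of them, so the training signal reflects the ordering of the classes.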

@inproceedings{OehmckeTBMBG2019DetectingHardly, author = {Oehmcke, Stefan and Thrysøe, Christoph and Borgstad, Andreas and Salles, Marcos Vaz and Brandt, Martin and Gieseke, Fabian}, title = {Detecting Hardly Visible Roads in Low-Resolution Satellite Time Series Data}, booktitle = {2019 {IEEE} International Conference on Big Data {(IEEE} BigData)}, pages = {2403--2412}, year = {2019}, publisher = {IEEE}, doi = {10.1109/BigData47090.2019.9006251}, tags = {ml,application,rs}, }

- F. Gieseke and C. Igel. KDD18: Proceedings of the 24th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, KDD 2018, London, UK, August 19-23, 2018

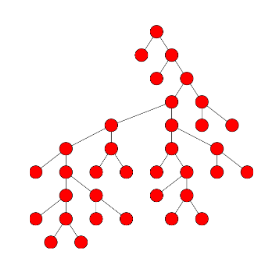

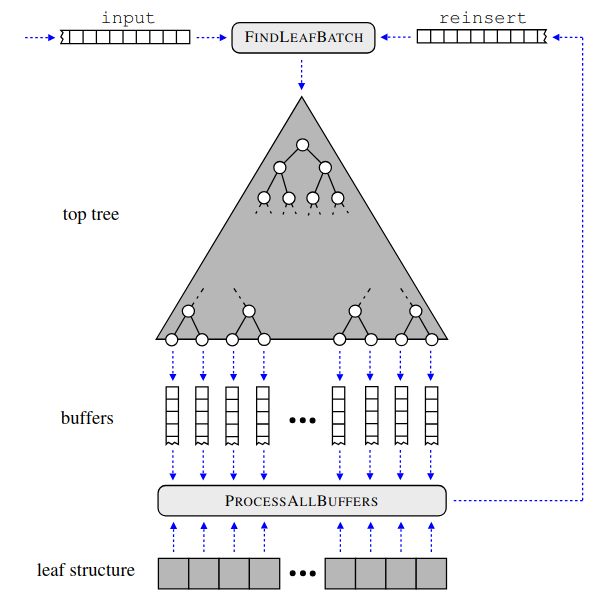

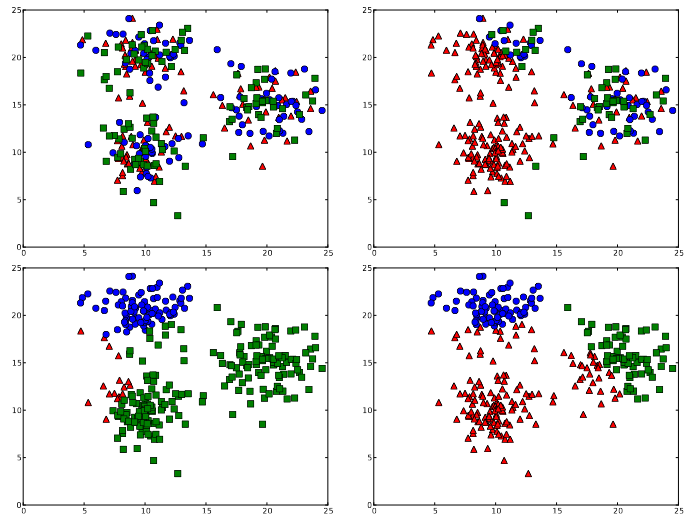

Without access to large compute clusters, building random forests on large datasets is still a challenging problem. This is, in particular, the case if fully-grown trees are desired. We propose a simple yet effective framework that makes it possible to efficiently construct ensembles of huge trees for hundreds of millions or even billions of training instances using a cheap desktop computer with commodity hardware. The basic idea is to consider a multi-level construction scheme, which builds top trees for small random subsets of the available data and which subsequently distributes all training instances to the top trees’ leaves for further processing. While the scheme is conceptually simple, its overall efficiency crucially depends on the particular implementation of the different phases. The practical merits of our approach are demonstrated using dense datasets with hundreds of millions of training instances.
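The multi-level idea described above can be sketched in a few lines. This is a toy, single-tree illustration under strong assumptions and not the authors' implementation: the top tree uses median splits on random features, and the "further processing" at each leaf is reduced to storing the majority label instead of growing a full bottom tree. All names and the toy data are hypothetical.

```python
import random
from collections import Counter, defaultdict


def build_top_tree(points, depth, rng):
    """Build a small top tree from a data subset; None marks a leaf."""
    if depth == 0 or len(points) < 2:
        return None
    feature = rng.randrange(len(points[0]))
    values = sorted(p[feature] for p in points)
    threshold = values[len(values) // 2]
    left = [p for p in points if p[feature] < threshold]
    right = [p for p in points if p[feature] >= threshold]
    if not left or not right:
        return None
    return (feature, threshold,
            build_top_tree(left, depth - 1, rng),
            build_top_tree(right, depth - 1, rng))


def leaf_id(tree, point):
    """Route a point through the top tree; the leaf is named by its L/R path."""
    path = ""
    while tree is not None:
        feature, threshold, left, right = tree
        if point[feature] < threshold:
            tree, path = left, path + "L"
        else:
            tree, path = right, path + "R"
    return path


def fit_two_level(X, y, subset_size, depth, seed=0):
    rng = random.Random(seed)
    subset = rng.sample(X, min(subset_size, len(X)))  # small random subset
    top = build_top_tree(subset, depth, rng)
    buckets = defaultdict(list)
    for point, label in zip(X, y):  # one pass distributes all instances
        buckets[leaf_id(top, point)].append(label)
    # Stand-in for growing a bottom tree per leaf: keep the majority label.
    leaf_models = {leaf: Counter(labels).most_common(1)[0][0]
                   for leaf, labels in buckets.items()}
    return top, leaf_models


def predict(model, point, default=None):
    top, leaf_models = model
    return leaf_models.get(leaf_id(top, point), default)


# Toy one-dimensional data with a single class boundary at 0.5.
X = [(i / 200,) for i in range(200)]
y = [int(x[0] >= 0.5) for x in X]
model = fit_two_level(X, y, subset_size=50, depth=4)
accuracy = sum(predict(model, p) == label for p, label in zip(X, y)) / len(X)
```

The point of the sketch is the data flow: only the small subset is needed to build the top tree, and the full dataset is touched once when it is distributed to the leaves, after which each leaf can be processed independently.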

@inproceedings{GiesekeI2018TrainingBig, author = {Gieseke, Fabian and Igel, Christian}, title = {Training Big Random Forests with Little Resources}, booktitle = {Proceedings of the 24th ACM SIGKDD International Conference on Knowledge Discovery \& Data Mining, KDD 2018, London, UK, August 19-23, 2018}, pages = {1445--1454}, year = {2018}, publisher = {ACM}, doi = {10.1145/3219819.3220124}, tags = {ml,de}, }

- F. Gieseke, C. E. Oancea, A. Mahabal, C. Igel, and T. Heskes. High Performance Computing for Computational Science - VECPAR 2018 - 13th International Conference, São Pedro, Brazil, September 17-19, 2018, Revised Selected Papers, 2018

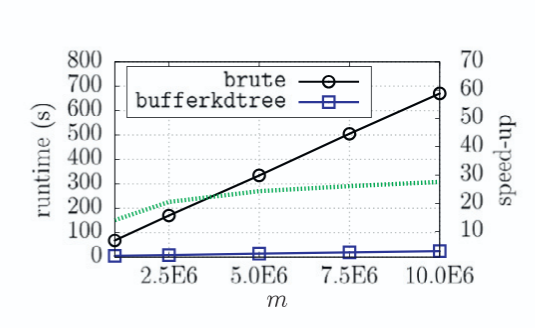

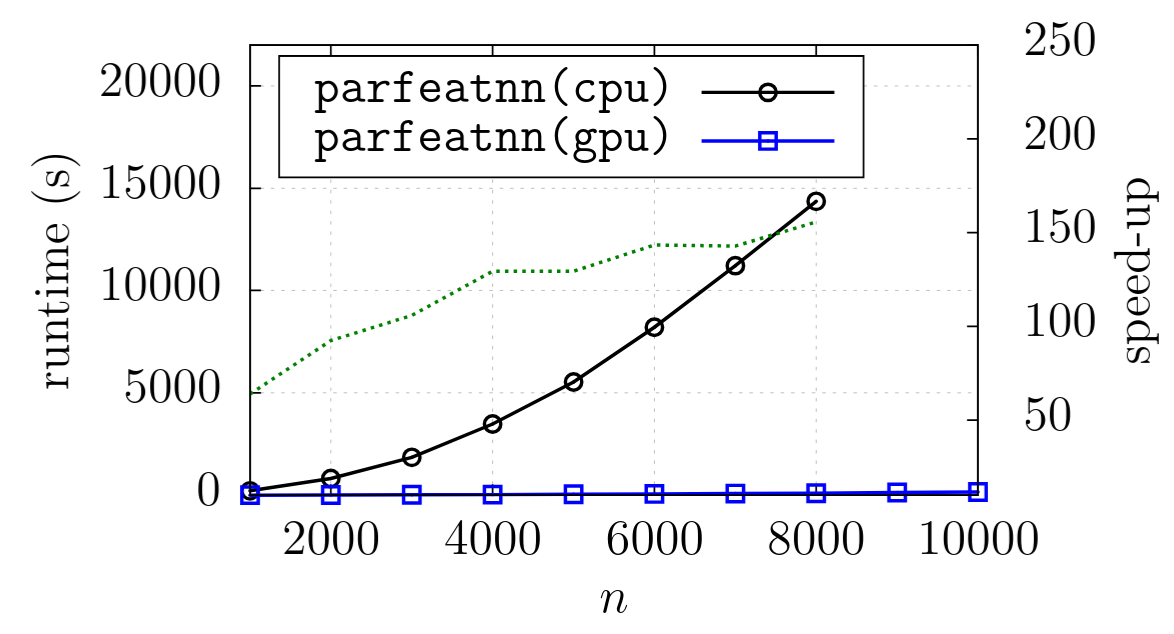

A buffer k-d tree is a k-d tree variant for massively-parallel nearest neighbor search. While providing valuable speed-ups on modern many-core devices when large numbers of both reference and query points are given, buffer k-d trees are limited by the number of points that can fit on a single device. In this work, we show how to modify the original data structure and the associated workflow to make the overall approach capable of dealing with massive data sets. We further provide a simple yet efficient way of using multiple devices available in a single workstation. The applicability of the modified framework is demonstrated in the context of astronomy, a field that is faced with huge amounts of data.

@inproceedings{GiesekeOMIH2018BiggerBuffer, author = {Gieseke, Fabian and Oancea, Cosmin Eugen and Mahabal, Ashish and Igel, Christian and Heskes, Tom}, title = {Bigger Buffer k-d Trees on Multi-Many-Core Systems}, booktitle = {High Performance Computing for Computational Science --- VECPAR 2018 --- 13th International Conference, São Pedro, Brazil, September 17-19, 2018, Revised Selected Papers}, pages = {202--214}, series = {Lecture Notes in Computer Science}, volume = {11333}, year = {2018}, publisher = {Springer}, doi = {10.1007/978-3-030-15996-2\_15}, tags = {de}, }

- M. Mehren, F. Gieseke, J. Verbesselt, S. Rosca, S. Horion, and A. Zeileis. Proceedings of the 30th International Conference on Scientific and Statistical Database Management, SSDBM 2018, Bozen-Bolzano, Italy, July 09-11, 2018

The field of remote sensing is nowadays faced with huge amounts of data. While this offers a variety of exciting research opportunities, it also yields significant challenges regarding both computation time and space requirements. In practice, the sheer data volumes render existing approaches too slow for processing and analyzing all the available data. This work aims at accelerating BFAST, one of the state-of-the-art methods for break detection given satellite image time series. In particular, we propose a massively-parallel implementation for BFAST that can effectively make use of modern parallel compute devices such as GPUs. Our experimental evaluation shows that the proposed GPU implementation is up to four orders of magnitude faster than the existing publicly available implementation and up to ten times faster than a corresponding multi-threaded CPU execution. The dramatic decrease in running time renders the analysis of significantly larger datasets possible in seconds or minutes instead of hours or days. We demonstrate the practical benefits of our implementations given both artificial and real datasets.

@inproceedings{MehrenGVRHZ2018MassivelyParallel, author = {Mehren, Malte and Gieseke, Fabian and Verbesselt, Jan and Rosca, Sabina and Horion, Stefanie and Zeileis, Achim}, title = {Massively-parallel break detection for satellite data}, booktitle = {Proceedings of the 30th International Conference on Scientific and Statistical Database Management, SSDBM 2018, Bozen-Bolzano, Italy, July 09-11, 2018}, pages = {5:1--5:10}, year = {2018}, publisher = {ACM}, doi = {10.1145/3221269.3223032}, tags = {de}, }

- A. D’Isanto, S. Cavuoti, F. Gieseke, and K. L. Polsterer. Astronomy & Astrophysics, 2018

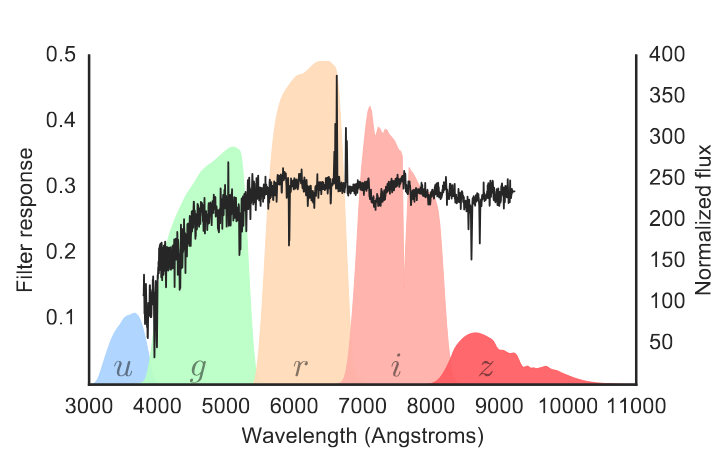

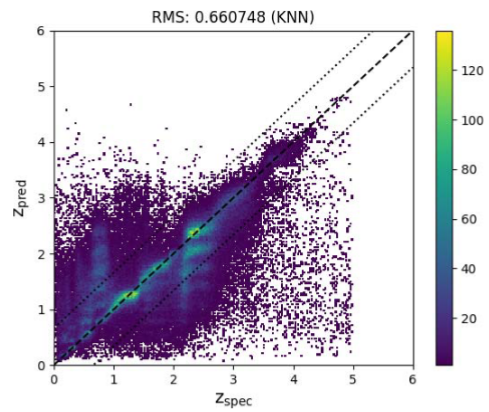

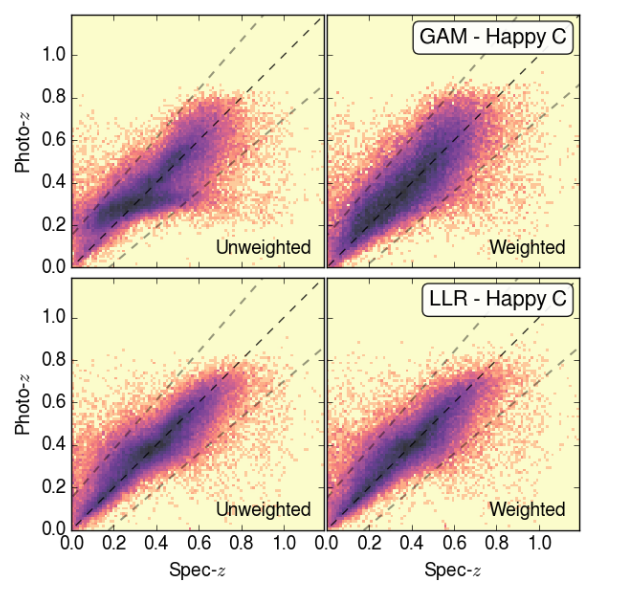

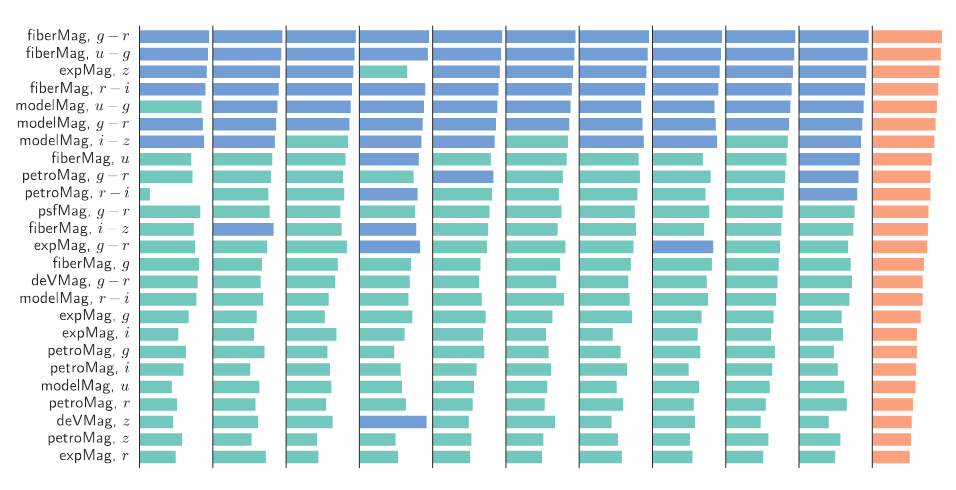

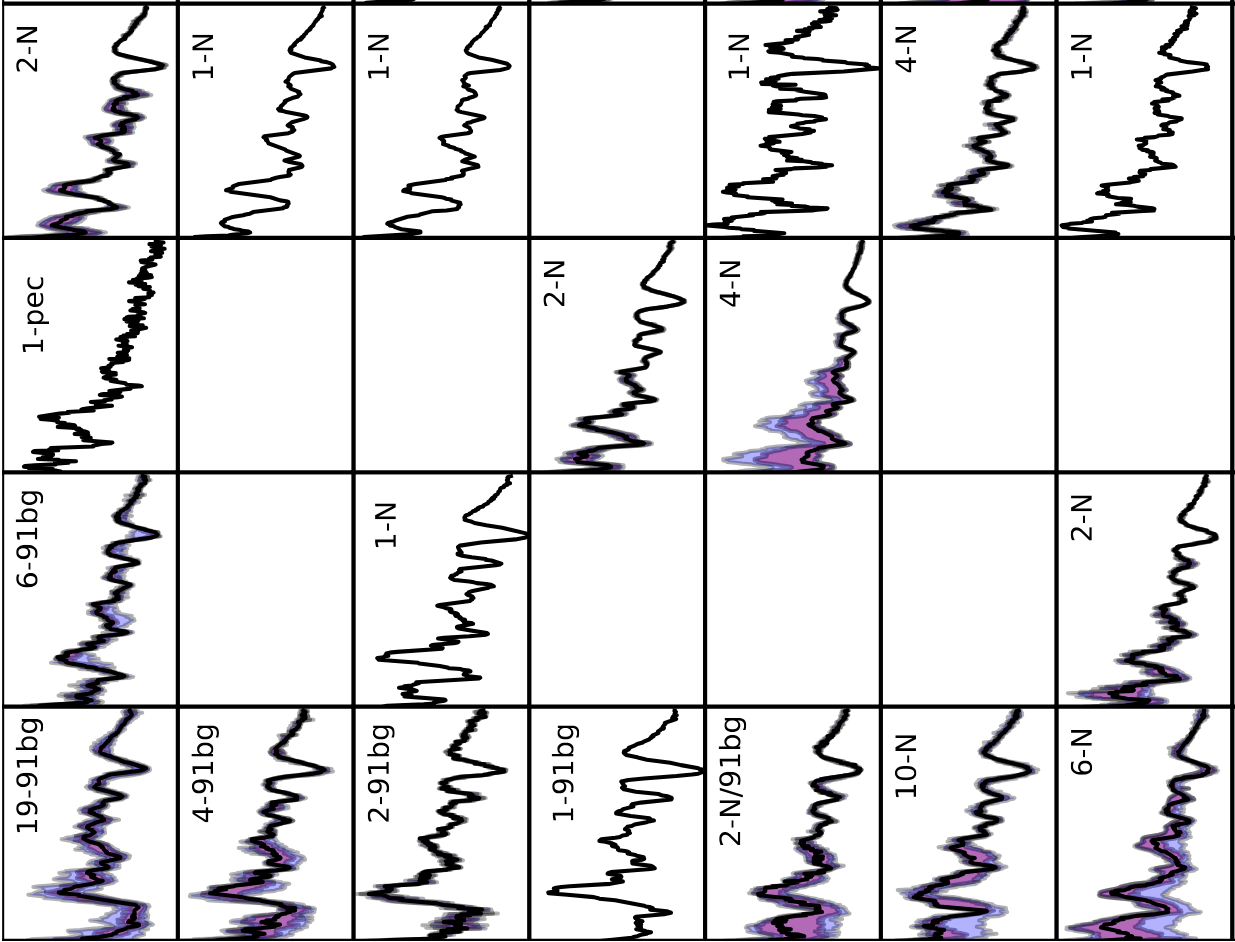

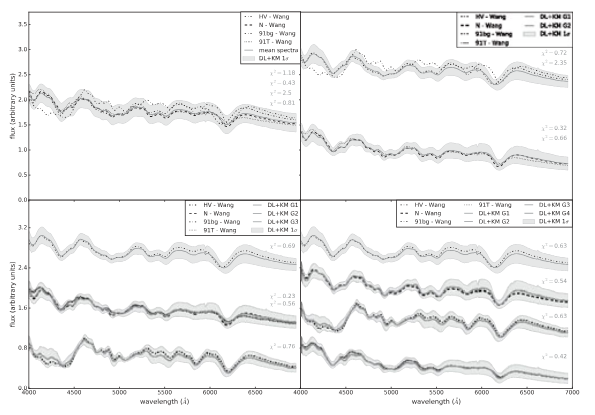

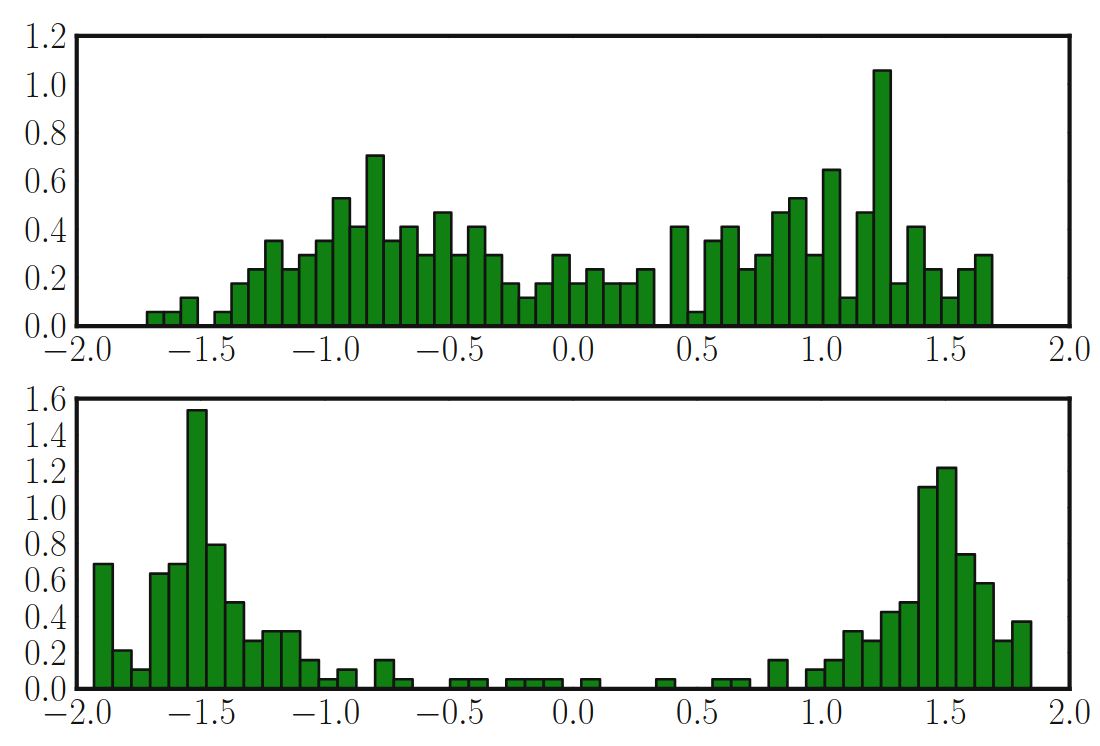

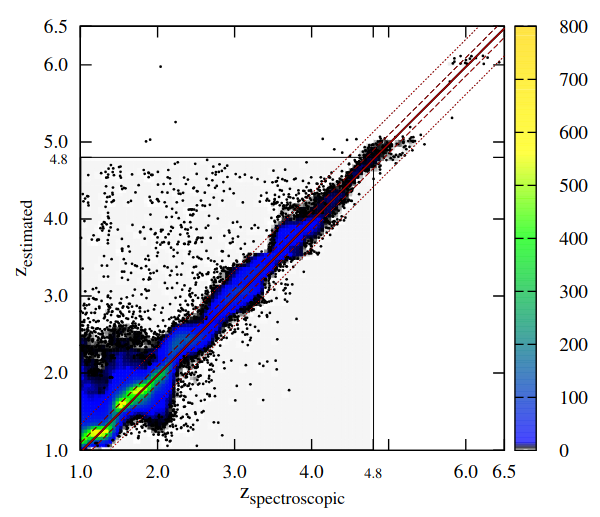

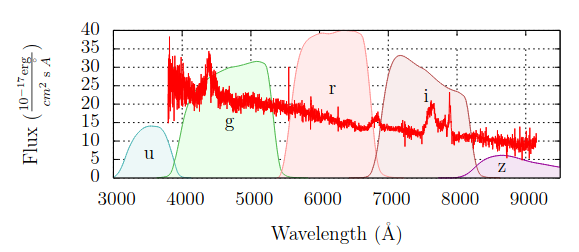

The explosion of data in recent years has generated an increasing need for new analysis techniques in order to extract knowledge from massive datasets. Machine learning has proved particularly useful for this task. Fully automated methods have recently gained great popularity, even though those methods often lack physical interpretability. In contrast, feature-based approaches can provide both well-performing models and understandable causalities with respect to the correlations found between features and physical processes. Efficient feature selection is an essential tool to boost the performance of machine learning models. In this work, we propose a forward selection method in order to compute, evaluate, and characterize better performing features for regression and classification problems. Given the importance of photometric redshift estimation, we adopt it as our case study. We synthetically created 4,520 features by combining magnitudes, errors, radii, and ellipticities of quasars, taken from the SDSS. We apply a forward selection process, a recursive method in which a huge number of feature sets is tested through a kNN algorithm, leading to a tree of feature sets. The branches of the tree are then used to perform experiments with the random forest, in order to validate the best set with an alternative model. We demonstrate that the sets of features determined with our approach significantly improve the performance of the regression models when compared to the classic features from the literature. The features found are unexpected and surprising, being very different from the classic features. Therefore, a method to interpret some of the features found in a physical context is presented. The methodology described here is very general and can be used to improve the performance of machine learning models for any regression or classification task.
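A minimal sketch of forward selection driven by a kNN model is given below. It is a simplification under stated assumptions: it follows a single greedy branch rather than the tree of feature sets described in the abstract, it scores candidate sets by leave-one-out mean squared error, and the function names and toy data are hypothetical.

```python
def knn_loo_error(X, y, features, k=3):
    """Leave-one-out mean squared error of kNN regression using only `features`."""
    total = 0.0
    for i, xi in enumerate(X):
        # Squared Euclidean distances to all other points, restricted to the subset.
        dists = sorted(
            (sum((xi[f] - xj[f]) ** 2 for f in features), j)
            for j, xj in enumerate(X) if j != i
        )
        prediction = sum(y[j] for _, j in dists[:k]) / k
        total += (prediction - y[i]) ** 2
    return total / len(X)


def forward_selection(X, y, max_features, k=3):
    """Greedily add the feature that lowers the error most; stop when none helps."""
    selected, best_error = [], float("inf")
    candidates = set(range(len(X[0])))
    while candidates and len(selected) < max_features:
        error, feature = min(
            (knn_loo_error(X, y, selected + [f], k), f) for f in sorted(candidates)
        )
        if error >= best_error:
            break
        selected.append(feature)
        candidates.remove(feature)
        best_error = error
    return selected, best_error


# Toy data: feature 0 determines the target, feature 1 is a scrambled copy.
X = [(float(i), float((7 * i) % 20)) for i in range(20)]
y = [float(i) for i in range(20)]
selected, error = forward_selection(X, y, max_features=2)
```

On this toy data the informative feature is picked first, because neighbours in the scrambled feature have unrelated target values. Keeping several of the best candidates at each step instead of one would yield a tree of feature sets like the one the abstract mentions.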

@article{DIsantoCGP2018ReturnOfThe, author = {D'Isanto, Antonio and Cavuoti, Stefano and Gieseke, Fabian and Polsterer, Kai Lars}, title = {Return of the features --- Efficient feature selection and interpretation for photometric redshifts}, journal = {Astronomy \& Astrophysics}, year = {2018}, volume = {616}, pages = {A97}, doi = {10.1051/0004-6361/201833103}, tags = {application}, }

- C. Florea and F. Gieseke. Journal of Visual Communication and Image Representation, 2018

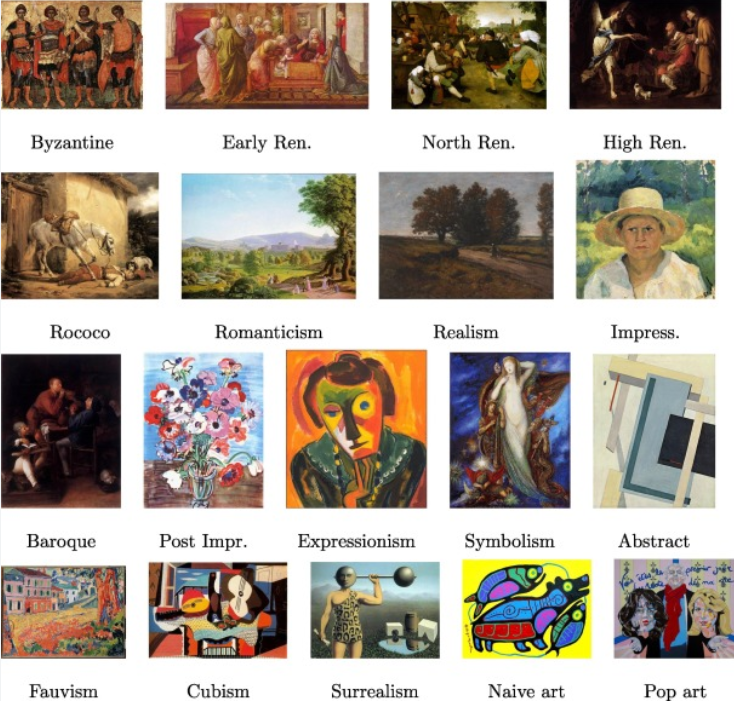

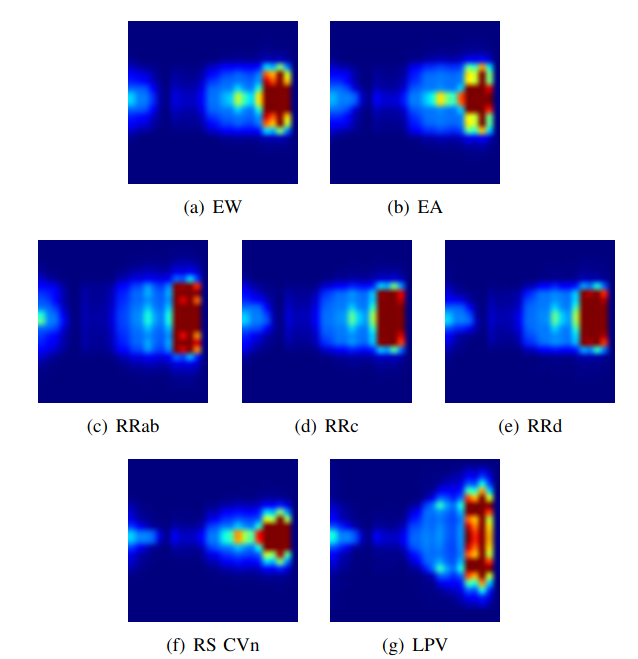

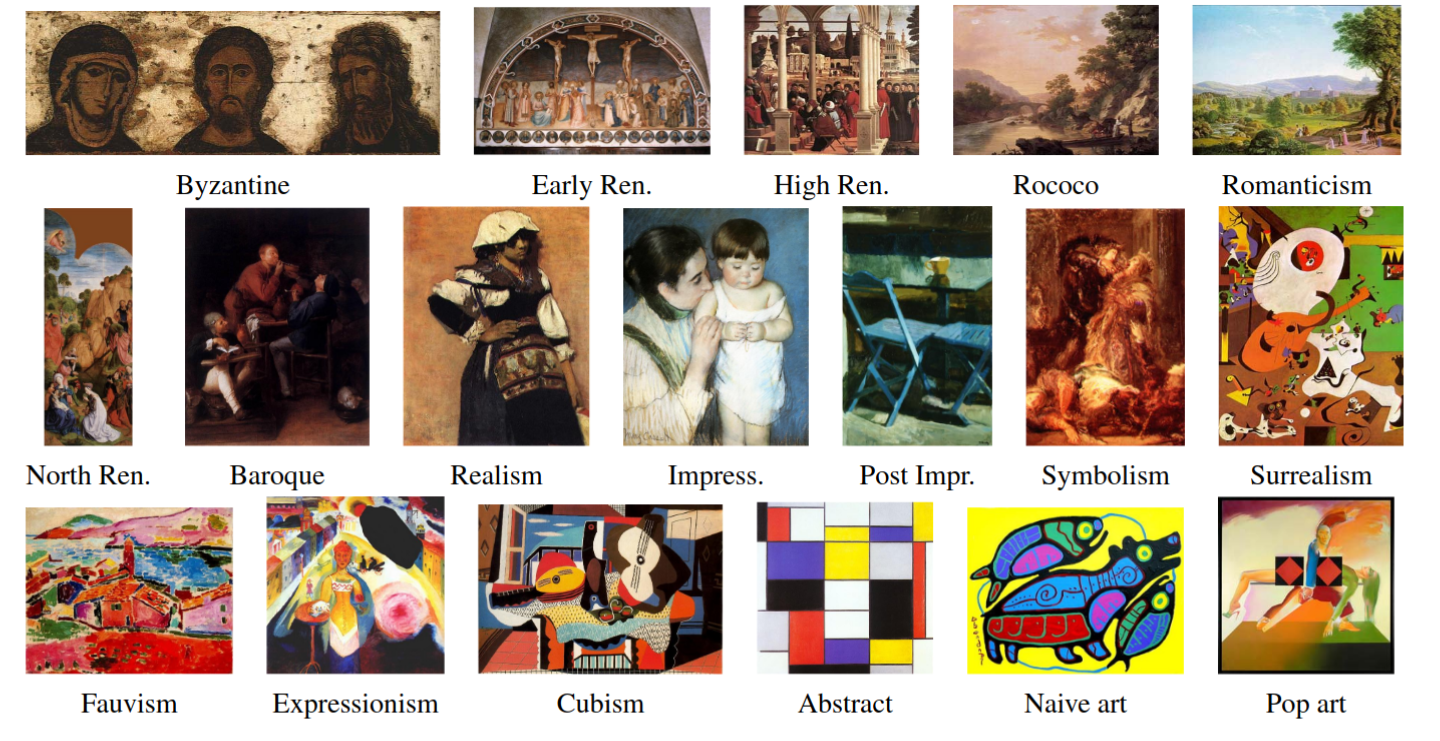

In this work, we aim to automatically recognize the artistic movement from a digitized image of a painting. Our approach uses a new system that resorts to descriptions induced by color structure histograms and by novel topographical features for texture assessment. The topographical descriptors accumulate information from the first and second local derivatives within four layers of finer representations. The classification is performed by two layers of ensembles. The first is an adapted boosted ensemble of support vector machines, which introduces further randomization over feature categories as a regularization. The training of the ensemble yields individual experts by isolating initially misclassified images and by correcting them in further stages of the process. The solution improves the performance through a second layer built upon the consensus of multiple local experts that analyze different parts of the images. The resulting performance compares favorably with classical solutions and manages to match that of modern deep learning frameworks.

@article{FloreaG2018ArtisticMovement, author = {Florea, Corneliu and Gieseke, Fabian}, title = {Artistic movement recognition by consensus of boosted SVM based experts}, journal = {Journal of Visual Communication and Image Representation}, year = {2018}, volume = {56}, pages = {220--233}, doi = {10.1016/j.jvcir.2018.09.015}, tags = {application}, }

- F. Gieseke, S. Bloemen, C. Bogaard, T. Heskes, J. Kindler, R. A. Scalzo, V. A. Ribeiro, J. Roestel, P. J. Groot, F. Yuan, A. Möller, and B. E. Tucker. JOURNAL: Monthly Notices of the Royal Astronomical Society (MNRAS), 2017

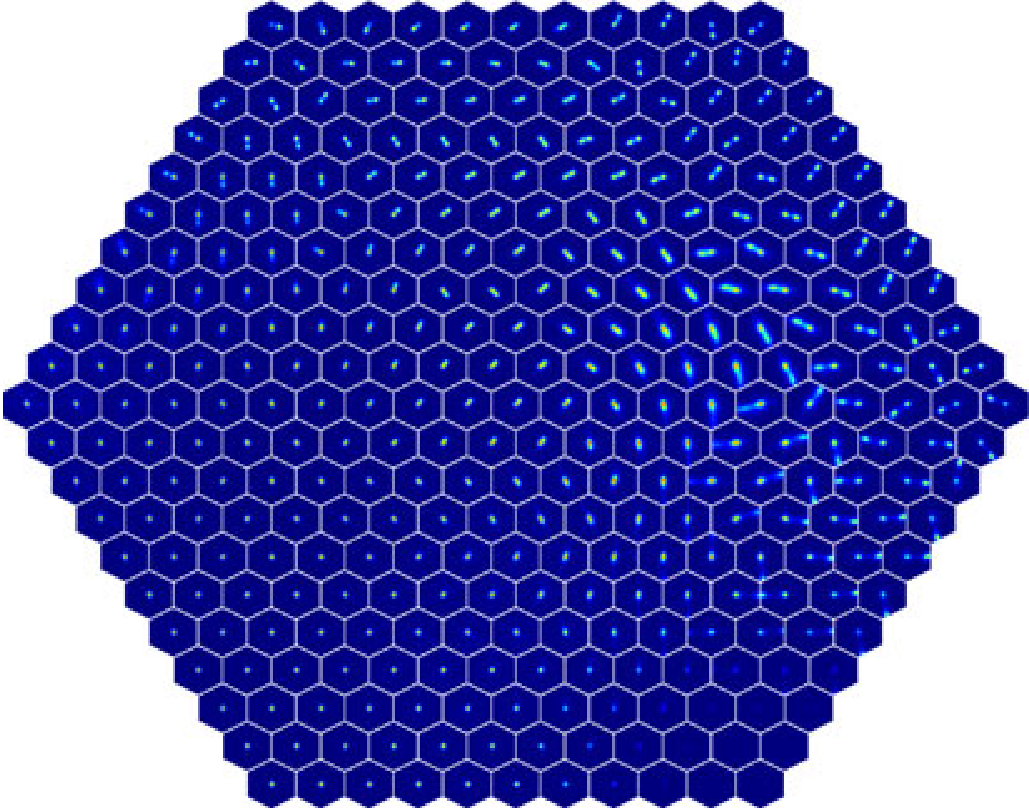

Current synoptic sky surveys monitor large areas of the sky to find variable and transient astronomical sources. As the number of detections per night at a single telescope easily exceeds several thousand, current detection pipelines make intensive use of machine learning algorithms to classify the detected objects and to filter out the most interesting candidates. A number of upcoming surveys will produce up to three orders of magnitude more data, which renders high-precision classification systems essential to reduce the manual and, hence, expensive vetting by human experts. We present an approach based on convolutional neural networks to discriminate between true astrophysical sources and artefacts in reference-subtracted optical images. We show that relatively simple networks are already competitive with state-of-the-art systems and that their quality can be further improved via slightly deeper networks and additional pre-processing steps, eventually yielding models that outperform state-of-the-art systems. In particular, our best model correctly classifies about 97.3 per cent of all ‘real’ and 99.7 per cent of all ‘bogus’ instances on a test set containing 1942 ‘bogus’ and 227 ‘real’ instances in total. Furthermore, the networks considered in this work can also successfully classify the objects at hand without relying on difference images, which might pave the way for future detection pipelines that contain no image subtraction steps at all.

@article{GiesekeEtAl2017, author = {Gieseke, Fabian and Bloemen, Steven and van den Bogaard, Cas and Heskes, Tom and Kindler, Jonas and Scalzo, Richard A. and Ribeiro, Valerio A.R.M. and van Roestel, Jan and Groot, Paul J. and Yuan, Fang and Möller, Anais and Tucker, Brad E.}, title = {Convolutional Neural Networks for Transient Candidate Vetting in Large-Scale Surveys}, journal = {Monthly Notices of the Royal Astronomical Society {(MNRAS)}}, year = {2017}, pages = {3101--3114}, volume = {472}, number = {3}, publisher = {Oxford University Press}, tags = {application,ml}, }

- R. S. Souza, M. L. L. Dantas, M. V. Costa-Duarte, E. D. Feigelson, M. Killedar, P. Lablanche, R. Vilalta, A. Krone-Martins, R. Beck, and F. Gieseke. Monthly Notices of the Royal Astronomical Society (MNRAS), 2017